Basic Linguistic Concepts

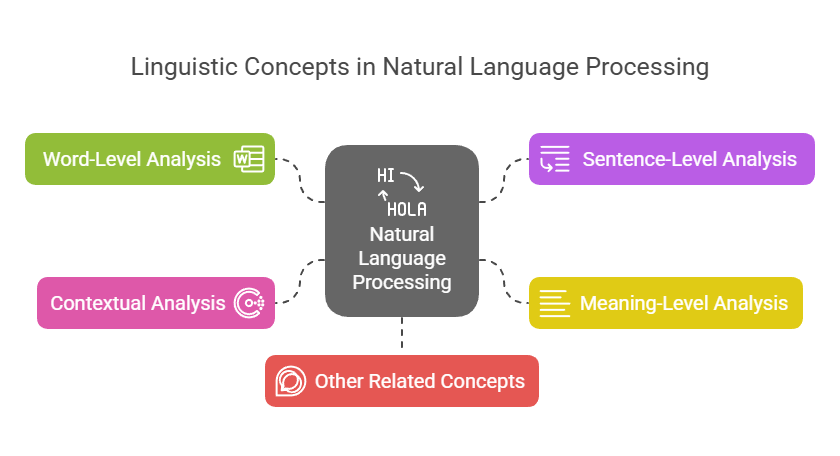

Natural Language Processing (NLP) conventionally processes language in a series of phases that mirror linguistic distinctions, so it depends on an understanding of fundamental linguistic ideas. Linguistics addresses basic questions about language, such as what people say and what those utterances say, ask, or request about the outside world. The structures that language uses to communicate are another important area of study.

The following provides a thorough overview of the main linguistic ideas that are pertinent to NLP:

Word-Level Analysis (Morphology and Lexical Analysis)

- Words are regarded as the fundamental units of texts written in natural language, but deciding what counts as a “word” can be difficult. A word can be thought of as a string in running text (like “delivers”) or as a more abstract entity called a lexeme, which covers a group of related strings (for example, the lexeme DELIVER names the set {delivers, deliver, delivering, delivered}).

- Tokenization, the process of recognizing words or tokens, often comes before other steps. In many languages it entails segmenting text according to punctuation and whitespace.

- The study of word structure is known as morphology. Words are assumed to be made up of morphemes, the smallest meaning-bearing units. A word's internal structure consists of a stem and one or more affixes: prefixes precede the stem, suffixes follow it, circumfixes surround it, and infixes are inserted inside it.

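- The -ed suffix for past tense in English is an example of inflectional morphology, which marks grammatical features such as gender, number, person, and tense. Derivational morphology uses affixes to change a word’s category or meaning, as when -ness turns the adjective “happy” into the noun “happiness”.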

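- Parsing a word reveals its base form, its affixes, and the features they carry. Morphological parsing must take account of morphotactics, the rules governing how morphemes are ordered, which matter especially in a language such as Turkish, where words are built from long sequences of morphemes. A common method for morphological analysis is finite-state analysis.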

- One of the most important phases in word-level text analysis is lexical analysis. Lemmatization, which links a word’s morphological variants to its lemma, is a fundamental task in lexical analysis. The lemma and its syntactic and semantic details are usually kept in a lemma dictionary. Being able to work with words in this way makes it possible to build semantic and syntactic abstractions.

- Words that have similar syntactic behaviour, and frequently a characteristic semantic type, are grouped together as Parts of Speech (POS), sometimes referred to as word categories, word classes, or lexical categories. Noun, verb, and adjective are widely accepted by linguists as the three main universal parts of speech. The process of assigning the appropriate part of speech to each word in a sentence is known as POS tagging. It can be thought of as a simplified form of morphological analysis.

- A computer-readable dictionary with lexical entries for every word and/or morpheme in a language, frequently incorporating syntactic and semantic information, is called a lexicon.

- Multiword expressions (MWEs), which are essential to language, are characterised by lexical, syntactic, semantic, pragmatic, or statistical idiomaticity. They have properties that are difficult to infer from their constituent words, as in “kick the bucket”, whose meaning cannot be predicted from “kick” and “bucket”.
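The word-level steps above (tokenization, morphological parsing, lemmatization, and part-of-speech lookup) can be sketched in a few lines of Python. This is a minimal illustration, not a real analyser: the `STEMS` lexicon, the `SUFFIXES` table, and the function names are all hypothetical, and the simple "stem + suffix" matching ignores spelling changes and irregular forms that a finite-state analyser would handle.

```python
import re

# Hypothetical toy lexicon: stem (lemma) -> part of speech.
STEMS = {"deliver": "VERB", "parcel": "NOUN", "courier": "NOUN", "the": "DET"}

# Hypothetical inflectional suffixes allowed for each part of speech.
# This table is a crude stand-in for morphotactics: it states which
# affixes may attach to which kind of stem.
SUFFIXES = {
    "VERB": {"s": "3SG.PRES", "ed": "PAST", "ing": "PROG"},
    "NOUN": {"s": "PLURAL"},
}

# A token is either a run of word characters or a single punctuation mark.
TOKEN_PATTERN = re.compile(r"\w+|[^\w\s]")


def tokenize(text):
    """Segment text into word and punctuation tokens."""
    return TOKEN_PATTERN.findall(text)


def parse_word(word):
    """Return (lemma, pos, feature) for a word, or None if no analysis is found."""
    form = word.lower()
    if form in STEMS:
        return (form, STEMS[form], "BASE")
    for stem, pos in STEMS.items():
        for suffix, feature in SUFFIXES.get(pos, {}).items():
            if form == stem + suffix:
                return (stem, pos, feature)
    return None


def lexeme_forms(stem):
    """Return the set of surface strings that the toy tables assign to a lexeme."""
    pos = STEMS[stem]
    return {stem} | {stem + suffix for suffix in SUFFIXES.get(pos, {})}
```

With these toy tables, `parse_word("delivered")` yields the lemma `deliver`, the category `VERB`, and the feature `PAST`, while `lexeme_forms("deliver")` reproduces the set of strings listed for DELIVER above. A form such as "parceled" gets no analysis because the table does not allow `-ed` on a noun stem.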

Sentence-Level Analysis (Syntax)

- Syntax is the study of how sentences are put together and of their internal organisation, that is, how words are combined into phrases and sentences. A sentence is frequently regarded as the fundamental building block of meaning analysis.

- Analysing a string of words, usually a sentence, to ascertain its structural description in accordance with a formal grammar is known as syntactic analysis, or parsing. This establishes the sentence’s grammatical or syntactic structure. Sentences are more than linear word sequences, and syntactic analysis uncovers their underlying hierarchical structure.

- Syntactic analysis checks grammaticality, the relationships between words, and how words group together. One of its purposes is to reject sentences that are grammatically ill-formed.

- A grammar formally describes the structural descriptions of word strings. In the generative tradition, a grammar is a collection of rules together with a vocabulary. According to generative grammar, the rules of a natural language should produce all and only its well-formed sentences. Context-Free Grammars (CFGs) are one kind of formal grammar used in parsing.

- Important ideas in syntax include constituency, the idea that a group of words functions as a unit in a given grammar, and the recognition of the boundaries and grammatical functions (such as Subject, Complement) of these constituents. The head is the most important element of a phrase. Dependency grammar is another paradigm, one that focusses on connections between words: each word is linked as a dependent to its head.

- Parsing frequently produces a hierarchical syntactic structure suitable for further processing, such as semantic interpretation. Syntax trees can be used to depict this structure. Data-driven approaches to syntax rely on resources such as treebanks, which are corpora annotated with parse trees; the Penn Treebank is one example.

- Structural ambiguity, in which a single word sequence might represent several distinct syntactic structures, is another issue that syntactic analysis tackles.
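To make the ideas of a context-free grammar and structural ambiguity concrete, the sketch below counts how many parse trees a small grammar assigns to a sentence, using a CKY-style chart. The grammar, the lexicon, and the function name `count_parses` are hypothetical choices made for illustration; the grammar is written with binary rules only, which this chart-filling approach requires.

```python
from collections import defaultdict

# Hypothetical toy grammar: (parent, left child, right child).
BINARY_RULES = [
    ("S", "NP", "VP"),
    ("VP", "V", "NP"),
    ("VP", "VP", "PP"),   # the PP attaches to the verb phrase
    ("NP", "Det", "N"),
    ("NP", "NP", "PP"),   # the PP attaches to the noun phrase
    ("PP", "P", "NP"),
]

# Hypothetical lexicon: word -> categories it can belong to.
LEXICAL_RULES = {
    "I": ["NP"],
    "saw": ["V"],
    "the": ["Det"],
    "a": ["Det"],
    "man": ["N"],
    "telescope": ["N"],
    "with": ["P"],
}


def count_parses(words, start="S"):
    """Count the distinct parse trees rooted in `start` that span all the words."""
    n = len(words)
    # chart[(i, j)][A] = number of trees with root A covering words[i:j]
    chart = defaultdict(lambda: defaultdict(int))
    for i, word in enumerate(words):
        for category in LEXICAL_RULES.get(word, []):
            chart[(i, i + 1)][category] += 1
    for span in range(2, n + 1):            # width of the span being filled
        for i in range(n - span + 1):       # left edge of the span
            j = i + span
            for k in range(i + 1, j):       # split point between the children
                for parent, left, right in BINARY_RULES:
                    chart[(i, j)][parent] += (
                        chart[(i, k)][left] * chart[(k, j)][right]
                    )
    return chart[(0, n)][start]
```

Under this toy grammar, `count_parses("I saw the man with a telescope".split())` finds two trees: one in which “with a telescope” modifies the seeing, and one in which it modifies “the man”. A word string that the grammar cannot derive, such as “saw the man” on its own, gets a count of zero, which corresponds to rejecting it as ill-formed.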

Meaning-Level Analysis (Semantics)

- Language semantics is the study of meaning. It entails analysing word, phrase, sentence, and utterance meanings in context.

- Meaning representation is the focus of semantic analysis. It converts original utterances into some form of semantic metalanguage and concentrates mostly on the literal meaning of words, phrases, and sentences. Methods influenced by philosophical logic may concentrate only on truth-conditional meaning, but a complete account requires a broader examination. Phrases like “hot ice-cream”, whose literal meaning is contradictory, are usually rejected by a semantic analyser.

- Semantic meaning may be explored at several levels, including formal, lexical, and conceptual semantics. Lexical semantics is specifically the study of word meaning. Each distinct meaning of a word is called a sense, and many words have more than one sense, making them polysemous. The process of determining the right sense of an ambiguous word in context is known as word sense disambiguation. When the senses are unrelated, as with “bank” (a financial institution or the side of a river), the phenomenon is called homonymy, which is often distinguished from polysemy proper, where the senses are related.

- The study of phrasal semantics examines how the syntactic combinations and individual word meanings are used to compose the meaning of phrases and sentences. This illustrates the Principle of Compositionality, which states that the syntactic organisation and meaning of the parts determine the meaning of the whole. Logic is frequently used to express compositional semantics.

- Relationships between word senses are described by sense relations. These include taxonomic relations such as hyponymy (the is-a relation, as in “dog” is a kind of “animal”) and hypernymy (the converse, in which the more general term covers the more specific one), meronymy (part-whole relation), opposition (including antonymy, complementarity, conversity, and reversity), and synonymy (same meaning). Hierarchical classifications of word senses or domain knowledge are represented by taxonomies, or occasionally ontologies. WordNet is a large lexical database of English organised around such relations.

- Semantic analysis relies on the predicate (usually the verb) and its arguments to determine the predicate-argument structure of a sentence. Semantic role labelling identifies the arguments of a predicate and their semantic relationship to it.

- The goal of semantic parsing is to transform sentences in natural language into formal representations of meaning.
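Two of the ideas above, taxonomic sense relations and word sense disambiguation, can be illustrated with a short sketch. Everything in it is an assumption made for illustration: the `HYPERNYM` taxonomy, the sense labels and glosses in `SENSES`, and the stopword list are invented, and the disambiguation function follows the spirit of the simplified Lesk approach (pick the sense whose gloss shares the most words with the context) rather than reproducing any particular system.

```python
# Hypothetical toy taxonomy: each sense points to its immediate hypernym.
HYPERNYM = {
    "poodle": "dog",
    "dog": "mammal",
    "cat": "mammal",
    "mammal": "animal",
}

# Hypothetical sense inventory with invented glosses.
SENSES = {
    "bank": {
        "bank/finance": "an institution that accepts deposits and lends money",
        "bank/river": "the sloping land beside a river or stream",
    }
}

# Small, hand-picked list of function words to ignore when comparing texts.
STOPWORDS = {"a", "an", "and", "of", "on", "or", "that", "the", "to"}


def hypernym_chain(sense):
    """Return the increasingly general terms above a sense in the taxonomy."""
    chain = []
    while sense in HYPERNYM:
        sense = HYPERNYM[sense]
        chain.append(sense)
    return chain


def is_hyponym_of(specific, general):
    """True if `specific` is a kind of `general` according to the taxonomy."""
    return general in hypernym_chain(specific)


def content_words(text):
    """Lowercase a text, split on whitespace, and drop stopwords."""
    return {w for w in text.lower().split() if w not in STOPWORDS}


def disambiguate(word, context):
    """Pick the sense of `word` whose gloss overlaps most with the context."""
    context_set = content_words(context)
    senses = SENSES[word]
    return max(senses, key=lambda s: len(context_set & content_words(senses[s])))
```

Here `is_hyponym_of("poodle", "animal")` holds because the chain above “poodle” reaches “animal”, whereas the reverse does not. For the context “she sat on the bank of the river” the gloss overlap favours the river sense, and for “the bank lends money to customers” it favours the financial sense. Real systems use far larger inventories, such as WordNet, and stronger context models.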

Contextual Analysis (Pragmatics and Discourse)

- Pragmatics focuses on how sentences are used and understood in different situations, and on how context influences interpretation. It requires knowledge of the world and involves reinterpreting what was said in terms of what was actually meant. Together with semantics, pragmatics studies how words relate to the outside world.

- Discourse integration, also known as discourse analysis, examines how the sentences that come before a given sentence influence how it is understood. The meaning of a sentence depends on earlier sentences and in turn shapes the meaning of later ones. Important ideas include cohesion and coherence, as well as coreference resolution, the process of determining whether several expressions in a text refer to the same real-world entity. In contrast to the sentence-level issues covered by syntax and semantics, discourse is frequently thought of as being concerned with the meaning of utterances or texts in context.
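Coreference resolution can be illustrated with a deliberately naive heuristic: link each pronoun to the most recent earlier mention that agrees with it in gender and number. The feature tables and the function name `resolve_pronouns` are hypothetical, and the recency rule is only a sketch of the task; practical systems rely on much richer syntactic, semantic, and discourse information.

```python
# Hypothetical mention inventory: surface form -> (gender, number).
ENTITY_FEATURES = {
    "Maria": ("female", "singular"),
    "John": ("male", "singular"),
    "parcel": ("neuter", "singular"),
    "parcels": ("neuter", "plural"),
}

# Hypothetical pronoun table; a gender of None is compatible with any gender.
PRONOUN_FEATURES = {
    "she": ("female", "singular"),
    "he": ("male", "singular"),
    "it": ("neuter", "singular"),
    "they": (None, "plural"),
}


def resolve_pronouns(tokens):
    """Map each pronoun's token index to the index of its chosen antecedent.

    The antecedent is the most recent preceding mention that agrees in
    number and (where the pronoun specifies one) gender. Pronouns with
    no compatible antecedent are left out of the result.
    """
    links = {}
    mentions = []  # (token index, (gender, number)) for mentions seen so far
    for i, token in enumerate(tokens):
        if token in ENTITY_FEATURES:
            mentions.append((i, ENTITY_FEATURES[token]))
        elif token.lower() in PRONOUN_FEATURES:
            gender, number = PRONOUN_FEATURES[token.lower()]
            for j, (m_gender, m_number) in reversed(mentions):
                if m_number == number and gender in (None, m_gender):
                    links[i] = j
                    break
    return links
```

For the token sequence “Maria called John because she needed the parcel . He delivered it”, this heuristic links “she” to “Maria”, “He” to “John”, and “it” to “parcel”. It illustrates why discourse processing cannot treat sentences in isolation: the second sentence is only interpretable once its pronouns are tied to entities introduced in the first.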

Other Related Concepts

- Phonetics and phonology are the branches of linguistics that study, respectively, the actual sounds that make up language and the structure of sound systems. They are crucial for speech recognition and synthesis, even though they are rarely at the heart of text-based NLP. Phonology also describes how pronunciation can place constraints on word structure.

- The rules for writing a language are known as orthography.

From the fundamental units of words to the intricate meaning of phrases and texts in context, these linguistic notions offer the theoretical foundation and framework that conventional NLP systems employ to process and comprehend human language.