Rule-Based vs Statistical Natural Language Processing (NLP)

Statistical NLP

- In recent years, the use of statistics in natural language processing (NLP) has grown in significance.

- It entails processing language using quantitative techniques, mostly drawn from linear algebra, information theory, and probability theory.

- Machine learning, which focusses on learning from data to extract information, find patterns, predict missing information, or create probabilistic models, is the primary source of statistical methods.

- This approach is empiricist: it emphasises what can be learned by observing language data.

- By assigning probabilities to linguistic events, statistical NLP enables systems to judge which phrases are “usual” and which are “unusual.” Practitioners are interested in the associations and preferences found across language use as a whole.

- For roughly a decade, core NLP techniques were dominated by linear models for supervised learning, such as Perceptrons, linear Support Vector Machines, and Logistic Regression, trained on high-dimensional feature vectors. Naive Bayes classifiers are also widely used.

- Statistical approaches are considered a good answer to the problem of ambiguity in language because they are robust, generalise well, and degrade gracefully when faced with errors or new data.

- Because statistical model parameters are often learned automatically from text corpora, building NLP systems requires less human labour.

- Statistical NLP techniques include N-gram language modelling, Hidden Markov Models (HMMs) for sequence prediction tasks such as part-of-speech (POS) tagging, discriminative models such as Conditional Random Fields (CRFs) for POS tagging and other sequence prediction tasks, and Probabilistic Context-Free Grammars (PCFGs) for statistical parsing.

- Statistical parsing techniques are based on statistical inference from samples of natural language text, and their main purpose is disambiguation: choosing the best analysis from the range of options that a grammar allows. To disambiguate while parsing, statistical parsers often use extended notions of word and category collocation as a stand-in for semantic and world knowledge.

- Applications such as information retrieval, speech recognition, and machine translation frequently use statistical methods.
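The idea of assigning probabilities to linguistic events can be made concrete with a bigram (N = 2) language model. The sketch below is a minimal illustration, not a production model: the five-sentence corpus is invented, tokenisation is plain whitespace splitting, and add-one (Laplace) smoothing is an assumed choice so that unseen word pairs still receive a small probability. It shows how corpus counts make a collocation such as “strong tea” more probable than “powerful tea”.

```python
import math
from collections import Counter

# Invented toy corpus (an assumption for illustration, not real data).
TOY_CORPUS = [
    "she drinks strong tea",
    "he likes strong tea",
    "strong tea is bitter",
    "a powerful engine",
    "a powerful computer",
]

def train_bigram_model(sentences):
    """Count vocabulary words, bigram histories, and bigrams from whitespace-tokenised text."""
    vocab, histories, bigrams = Counter(), Counter(), Counter()
    for sentence in sentences:
        tokens = sentence.lower().split()
        vocab.update(tokens)
        histories.update(tokens[:-1])  # words that are followed by another word
        bigrams.update(zip(tokens, tokens[1:]))
    return vocab, histories, bigrams

def bigram_prob(w1, w2, model):
    """P(w2 | w1) with add-one (Laplace) smoothing over the training vocabulary."""
    vocab, histories, bigrams = model
    return (bigrams[(w1, w2)] + 1) / (histories[w1] + len(vocab))

def phrase_log_prob(phrase, model):
    """Sum of log P(w_i | w_{i-1}) over adjacent word pairs in the phrase."""
    tokens = phrase.lower().split()
    return sum(math.log(bigram_prob(a, b, model)) for a, b in zip(tokens, tokens[1:]))

model = train_bigram_model(TOY_CORPUS)
for phrase in ["strong tea", "powerful tea"]:
    print(phrase, round(math.exp(phrase_log_prob(phrase, model)), 3))
# strong tea 0.267
# powerful tea 0.071
```

Here P(tea | strong) = (3 + 1) / (3 + 12) = 4/15, while P(tea | powerful) = (0 + 1) / (2 + 12) = 1/14, since the vocabulary has 12 word types. Real language models use far larger corpora, sentence-boundary tokens, and better smoothing methods.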

Rule-Based Natural Language Processing

- Handcrafted rules based on linguistic understanding are the foundation of rule-based techniques.

- Logic, rules, and ontologies served as the foundation for the early symbolic attempts at language processing.

- Rule-based systems can work well in certain domains or for particular tasks. For instance, handwritten rules can be quite helpful for analysing a language with intricate morphology.

- Rule-based POS taggers assign tags from linguistic patterns encoded in handcrafted rules, such as a rule that tags words ending in “-ing” as gerunds or present participles.

- Rule-based chatbots react to user input by following preset patterns and rules.

- These methods frequently entail hand-tuning and rule development.

- Both the Word and Paradigm (W&P) and Item and Arrangement (I&A) approaches to morphological analysis rely heavily on rules. For tasks such as POS tagging, finite-state machines and transducers can be used to express language rules or state transitions.

- Disambiguation techniques based on manual rule creation can suffer from a knowledge acquisition bottleneck and tend to perform poorly on naturally occurring text.

- Ambiguity also arises in rule-based morphological analysis, but it often has to be resolved using a broad context, where probabilistic information plays a smaller role. Ambiguity is more significant in the statistical analysis of syntax, where a single sentence may have multiple candidate parse trees.
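A rule-based tagger of the kind described above can be sketched with a small lexicon of closed-class words plus ordered suffix rules. The rules, the lexicon, and the Penn Treebank-style tag names (VBG, RB, NNS, and so on) are illustrative assumptions; the first matching rule wins, and unmatched words default to NN. NLTK's `RegexpTagger` follows a similar regular-expression approach.

```python
import re

# Hypothetical hand-written lexicon for a few closed-class words.
LEXICON = {"the": "DT", "a": "DT", "an": "DT", "is": "VBZ", "she": "PRP", "he": "PRP"}

# Hypothetical ordered suffix rules: (regular expression, tag). First match wins.
SUFFIX_RULES = [
    (r"^\d+(\.\d+)?$", "CD"),  # cardinal number
    (r"ing$", "VBG"),          # gerund or present participle
    (r"ed$", "VBD"),           # past-tense verb
    (r"ly$", "RB"),            # adverb
    (r"s$", "NNS"),            # plural noun
]

def rule_based_tag(words, default="NN"):
    """Tag each word using the lexicon first, then the suffix rules, then a default tag."""
    tagged = []
    for word in words:
        key = word.lower()
        tag = LEXICON.get(key)
        if tag is None:
            tag = next((t for pattern, t in SUFFIX_RULES if re.search(pattern, key)), default)
        tagged.append((word, tag))
    return tagged

print(rule_based_tag("the dog is running quickly".split()))
# [('the', 'DT'), ('dog', 'NN'), ('is', 'VBZ'), ('running', 'VBG'), ('quickly', 'RB')]
print(rule_based_tag(["thing"]))
# [('thing', 'VBG')]
```

The second call shows the weakness of manual rules: “thing” ends in “-ing” but is a noun, so someone must keep adding exceptions by hand, a small-scale version of the knowledge acquisition bottleneck.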

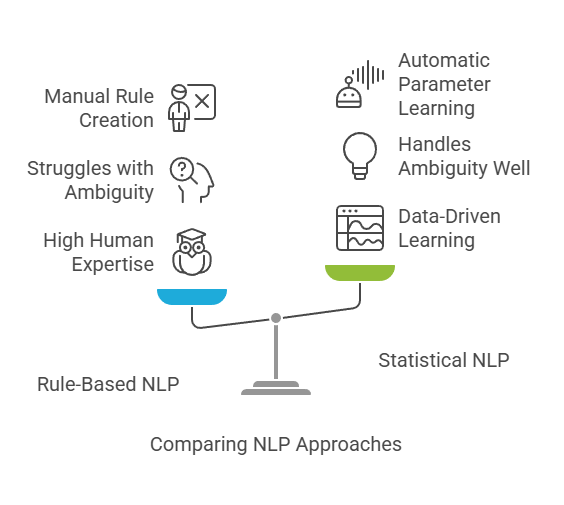

Rule-Based vs Statistical NLP

Basis: While rule-based NLP depends on manually created linguistic rules and symbolic representations, statistical NLP is based on quantitative techniques and data-driven learning.

Data Dependency: Statistical NLP needs corpora to estimate model parameters. Rule-based NLP relies less on large amounts of data for its core reasoning, but defining the rules requires a high level of human linguistic expertise.

Handling Ambiguity: Statistical approaches are well suited to the inherent ambiguity and unpredictability of natural language, because they identify patterns and preferences in data and use probability distributions to weigh competing options. Rule-based systems can struggle with uncertainty and unpredictability that their rules do not explicitly address.
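To illustrate how a statistical model weighs competing analyses, the sketch below runs the Viterbi algorithm over a tiny hidden Markov model for POS tagging. All probabilities are hand-picked toy numbers (an assumption, not estimates from any corpus), and the tag set is reduced to DT, NN, and VB. The word “book” is ambiguous between noun and verb; the model resolves it from the surrounding tags.

```python
# Toy HMM parameters, hand-picked for illustration only.
TAGS = ["DT", "NN", "VB"]
START_P = {"DT": 0.5, "NN": 0.2, "VB": 0.3}
TRANS_P = {  # TRANS_P[previous_tag][tag]; each row sums to 1
    "DT": {"DT": 0.05, "NN": 0.9, "VB": 0.05},
    "NN": {"DT": 0.3, "NN": 0.2, "VB": 0.5},
    "VB": {"DT": 0.6, "NN": 0.3, "VB": 0.1},
}
EMIT_P = {  # EMIT_P[tag][word]; missing words have probability 0
    "DT": {"a": 0.5, "the": 0.5},
    "NN": {"book": 0.3, "flight": 0.7},
    "VB": {"book": 1.0},
}

def viterbi(words, tags, start_p, trans_p, emit_p):
    """Return the most probable tag sequence for `words` under the HMM."""
    # best[i][tag] = (probability of the best path ending in `tag` at position i, previous tag)
    best = [{tag: (start_p[tag] * emit_p[tag].get(words[0], 0.0), None) for tag in tags}]
    for word in words[1:]:
        prev = best[-1]
        column = {}
        for tag in tags:
            column[tag] = max(
                ((prev[p][0] * trans_p[p][tag] * emit_p[tag].get(word, 0.0), p) for p in tags),
                key=lambda pair: pair[0],
            )
        best.append(column)
    # Backtrack from the most probable final tag.
    path = [max(tags, key=lambda tag: best[-1][tag][0])]
    for column in reversed(best[1:]):
        path.append(column[path[-1]][1])
    return list(reversed(path))

print(viterbi("book a flight".split(), TAGS, START_P, TRANS_P, EMIT_P))  # ['VB', 'DT', 'NN']
print(viterbi(["a", "book"], TAGS, START_P, TRANS_P, EMIT_P))           # ['DT', 'NN']
```

Real taggers usually work in log space to avoid numerical underflow on long sentences, and smooth their probabilities so that unseen words do not receive zero probability.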

Development: Statistical NLP learns model parameters automatically, minimising manual work, whereas rule-based systems are often limited by the knowledge acquisition bottleneck of manual rule creation and tuning.
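Automatic parameter learning can be as simple as counting. The sketch below computes maximum-likelihood estimates of the start, transition, and emission probabilities of an HMM tagger from a hand-tagged corpus; the four-sentence corpus and the tag names are invented for illustration. Where a rule-based system would need a person to write each rule, here the numbers come directly from the data.

```python
from collections import Counter, defaultdict

# Invented hand-tagged toy corpus: each sentence is a list of (word, tag) pairs.
TAGGED_CORPUS = [
    [("book", "VB"), ("a", "DT"), ("flight", "NN")],
    [("a", "DT"), ("book", "NN")],
    [("the", "DT"), ("flight", "NN")],
    [("read", "VB"), ("the", "DT"), ("book", "NN")],
]

def normalise(counter):
    """Turn raw counts into relative frequencies (maximum-likelihood estimates)."""
    total = sum(counter.values())
    return {key: count / total for key, count in counter.items()}

def estimate_hmm(tagged_sentences):
    """Estimate start, transition, and emission probabilities by counting."""
    start_counts = Counter()
    trans_counts = defaultdict(Counter)  # trans_counts[previous_tag][tag]
    emit_counts = defaultdict(Counter)   # emit_counts[tag][word]
    for sentence in tagged_sentences:
        tags = [tag for _, tag in sentence]
        start_counts[tags[0]] += 1
        for prev_tag, tag in zip(tags, tags[1:]):
            trans_counts[prev_tag][tag] += 1
        for word, tag in sentence:
            emit_counts[tag][word.lower()] += 1
    start_p = normalise(start_counts)
    trans_p = {tag: normalise(counts) for tag, counts in trans_counts.items()}
    emit_p = {tag: normalise(counts) for tag, counts in emit_counts.items()}
    return start_p, trans_p, emit_p

start_p, trans_p, emit_p = estimate_hmm(TAGGED_CORPUS)
print(start_p)
print(emit_p["NN"])
```

Any transition or word never seen in the corpus gets no entry, which amounts to a probability of zero; this is one reason practical systems add smoothing and need reasonably large corpora.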

Contemporary NLP: The line between rule-based and statistical approaches is increasingly blurred. Many modern systems use hybrid approaches that combine rule-based or linguistic components with statistical or machine learning techniques. For instance, statistical systems may incorporate linguistic rules about syntax and semantics, while rule-based systems may rank their rules using statistics. Deep learning architectures, which represent the state of the art for many tasks, are themselves built on statistical foundations.
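One simple way to combine the two approaches, sketched below under illustrative assumptions, is to let a statistical component decide wherever the training data offers evidence and to fall back to hand-written rules otherwise. Here the statistical part is a most-frequent-tag lexicon learned from an invented tagged corpus, and the rule-based part is a short list of hypothetical suffix rules. NLTK offers a comparable arrangement by chaining taggers through the `backoff` parameter (for example, a `UnigramTagger` backing off to a `RegexpTagger`).

```python
import re
from collections import Counter, defaultdict

# Invented tagged training data (an assumption for illustration).
TRAINING_DATA = [
    [("the", "DT"), ("thing", "NN"), ("is", "VBZ"), ("here", "RB")],
    [("a", "DT"), ("thing", "NN"), ("moved", "VBD")],
    [("she", "PRP"), ("is", "VBZ"), ("singing", "VBG")],
]

# Hypothetical hand-written fallback rules for words never seen in training.
SUFFIX_RULES = [(r"ing$", "VBG"), (r"ed$", "VBD"), (r"ly$", "RB"), (r"s$", "NNS")]

def train_unigram_tagger(tagged_sentences):
    """Statistical component: map each word to its most frequent tag in the data."""
    counts = defaultdict(Counter)
    for sentence in tagged_sentences:
        for word, tag in sentence:
            counts[word.lower()][tag] += 1
    return {word: tag_counts.most_common(1)[0][0] for word, tag_counts in counts.items()}

def hybrid_tag(words, lexicon, default="NN"):
    """Use learned tags where available; otherwise apply the suffix rules, then a default."""
    tagged = []
    for word in words:
        key = word.lower()
        if key in lexicon:  # statistical evidence available
            tag = lexicon[key]
        else:               # fall back to hand-written rules
            tag = next((t for pattern, t in SUFFIX_RULES if re.search(pattern, key)), default)
        tagged.append((word, tag))
    return tagged

lexicon = train_unigram_tagger(TRAINING_DATA)
print(hybrid_tag("the thing is jumping".split(), lexicon))
# [('the', 'DT'), ('thing', 'NN'), ('is', 'VBZ'), ('jumping', 'VBG')]
```

Because “thing” appears in the training data as a noun, the learned lexicon overrides the “-ing” rule, while the unseen word “jumping” is still handled by the rule.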