Advantages of multilayer perceptron

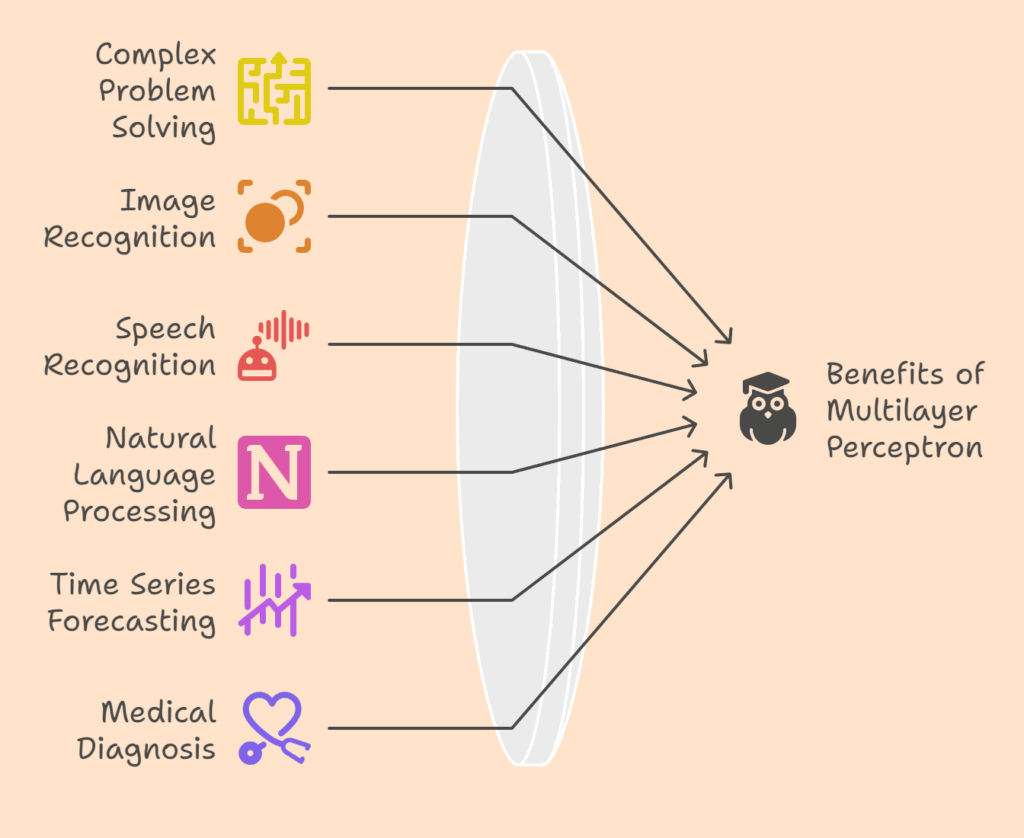

MLPs are versatile models with many applications:

- Complex Problem Solving: MLPs can learn complex, non-linear patterns and make accurate predictions in many fields. Their ability to form non-linear decision boundaries lets them solve problems such as XOR, which a single-layer perceptron cannot.

- Image Recognition: Classifying images into distinct categories, such as dogs or cats. MLPs are applied to image processing by taking features extracted from images as input.

- Speech Recognition: Converting spoken language into text.

- Natural Language Processing (NLP): Tasks such as machine translation, sentiment analysis, and text interpretation.

- Time Series Forecasting: Estimating future values from historical data.

- Forecasting Models: Building forecasting models, such as one that predicts the consumer price index from food prices.

- Medical Diagnosis: Diagnosing conditions such as breast cancer by recognizing complex patterns in medical images.

- Fault Detection: Detecting faults in wind turbines and other systems.

- Categorization: Identifying rice leaf diseases and solving general classification problems.
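
To make the XOR point concrete, here is a minimal sketch of a 2-2-1 MLP with hand-picked (not learned) weights. It uses a step activation purely for readability; this is an assumption for illustration, since trained MLPs normally use differentiable activations such as sigmoid or ReLU. One hidden unit acts as OR, the other as AND, and the output fires only when OR is true and AND is not.

```python
def step(z):
    """Heaviside step activation: 1 if z > 0, else 0."""
    return 1 if z > 0 else 0

# Hand-picked weights for illustration only (no training involved).
# Hidden unit 1 computes OR, hidden unit 2 computes AND.
HIDDEN_WEIGHTS = [(1.0, 1.0), (1.0, 1.0)]
HIDDEN_BIASES = [-0.5, -1.5]
# Output unit computes (OR) AND NOT (AND), which is XOR.
OUTPUT_WEIGHTS = (1.0, -1.0)
OUTPUT_BIAS = -0.5

def mlp_xor(x1, x2):
    """Forward pass of a 2-2-1 MLP that computes XOR."""
    hidden = [
        step(w1 * x1 + w2 * x2 + bias)
        for (w1, w2), bias in zip(HIDDEN_WEIGHTS, HIDDEN_BIASES)
    ]
    z_out = OUTPUT_WEIGHTS[0] * hidden[0] + OUTPUT_WEIGHTS[1] * hidden[1] + OUTPUT_BIAS
    return step(z_out)

for a in (0, 1):
    for b in (0, 1):
        print(a, b, "->", mlp_xor(a, b))
```

No single straight line separates XOR's positive and negative cases, which is why the hidden layer is needed: it remaps the inputs into a space where the output unit's single linear threshold is enough.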

Disadvantages of multilayer perceptron

Despite their strengths, MLPs have several disadvantages:

Computational Cost

Training MLPs can be computationally expensive, especially with large architectures or huge datasets.

Hyperparameter Tuning

Finding the best number of hidden layers, number of neurons per layer, and choice of activation functions often takes considerable trial and error. Too many neurons can lead to overfitting, while too few can limit the network's ability to learn the task at all.

Vanishing Gradient Problem

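In deeper networks, gradients can shrink toward zero as they are backpropagated through many layers, so the earliest layers learn very slowly or stop learning altogether. Resolving this calls for specialized techniques, such as ReLU-family activations or careful weight initialization.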
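
To see why depth makes this worse, consider sigmoid activations: the sigmoid's derivative σ'(z) = σ(z)(1 - σ(z)) is at most 0.25, and backpropagation multiplies in one such factor per layer. The sketch below assumes, purely for illustration, that every layer has the same scalar weight (1.0) and pre-activation (z = 0, where the derivative peaks). That is the best case for sigmoid with unit weights; real networks use weight matrices, and activations such as ReLU (derivative 1 for positive inputs) behave differently.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

def sigmoid_derivative(z):
    s = sigmoid(z)
    return s * (1.0 - s)  # peaks at 0.25 when z = 0

def gradient_scale(num_layers, z=0.0, weight=1.0):
    """Factor by which a gradient is multiplied when backpropagated
    through num_layers sigmoid layers.

    Simplifying assumption: every layer has the same scalar weight and
    the same pre-activation z.
    """
    scale = 1.0
    for _ in range(num_layers):
        scale *= weight * sigmoid_derivative(z)
    return scale

for depth in (1, 5, 10, 20):
    print(f"{depth:2d} layers -> gradient scaled by {gradient_scale(depth):.2e}")
```

Even in this best case, the factor is 0.25 raised to the number of layers, so it falls off exponentially with depth.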

Overfitting

Overfitting occurs when a model learns the training data, including its noise, too well and fails to generalize to new, unseen data.

Prevention Strategies

Ways to reduce overfitting include increasing the number of training samples; early stopping, which monitors performance on a validation set and halts training when the validation error stops improving; and cross-validation, which helps choose optimal hyperparameter values and when to stop training. Dropout and other regularization strategies also help prevent overfitting.
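
The early-stopping rule described above can be sketched as a small function over per-epoch validation losses. This is a minimal sketch under stated assumptions: the `patience` and `min_delta` parameters are common conventions rather than something specified here, the function name is hypothetical, and the losses in the usage example are made-up numbers. In a real training loop you would compute the validation loss after each epoch instead of passing a precomputed list.

```python
def early_stopping_epoch(val_losses, patience=3, min_delta=0.0):
    """Return (stop_epoch, best_epoch) for a sequence of validation losses.

    Training stops once the validation loss has failed to improve by more
    than min_delta for `patience` consecutive epochs. best_epoch is the
    epoch whose weights would typically be restored.
    """
    best_loss = float("inf")
    best_epoch = 0
    epochs_without_improvement = 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss - min_delta:
            best_loss = loss
            best_epoch = epoch
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch, best_epoch
    # Patience never ran out: training ran to the last epoch.
    return len(val_losses) - 1, best_epoch

# Made-up validation losses: they improve, then rise as the model overfits.
losses = [0.90, 0.70, 0.55, 0.50, 0.52, 0.53, 0.56, 0.60]
stop, best = early_stopping_epoch(losses, patience=3)
print(f"stop at epoch {stop}, restore weights from epoch {best}")
```

Frameworks ship this logic ready-made (for example, Keras's `EarlyStopping` callback); the sketch only shows the underlying rule.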

Underfitting

Underfitting occurs when the model is too simple for the task, so it lacks the discriminative power to capture the underlying patterns, even in the training data.

Slow Convergence

Training can be slow and require many epochs, particularly for complex problems.

Complexity Control

Finding the right MLP complexity matters: exceeding it causes overfitting, and falling short of it causes underfitting. Complexity can be managed through the network structure (e.g., committee machines), the training method (e.g., early stopping, cross-validation), and data preprocessing (e.g., feature extraction, feature selection, and dimensionality reduction).

Initialization Sensitivity

Weights and biases must be initialized carefully to help the model avoid poor local minima and to train effectively.
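
As one concrete example, here is a minimal sketch of Glorot (Xavier) uniform initialization, a widely used scheme (it is, for instance, the default kernel initializer for Keras `Dense` layers). The text above does not name a specific scheme, so this is a swapped-in illustration: weights are drawn from U(-limit, limit) with limit = sqrt(6 / (fan_in + fan_out)), and biases start at zero. The function name and the 784-to-128 layer size are hypothetical.

```python
import math
import random

def glorot_uniform_layer(fan_in, fan_out, seed=None):
    """Initialize one dense layer with Glorot (Xavier) uniform weights
    and zero biases.

    Random weights break the symmetry between neurons; the limit is chosen
    to keep activation variance roughly stable from layer to layer.
    Returns (weights, biases, limit), with weights shaped [fan_out][fan_in].
    """
    rng = random.Random(seed)
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    weights = [[rng.uniform(-limit, limit) for _ in range(fan_in)]
               for _ in range(fan_out)]
    biases = [0.0] * fan_out
    return weights, biases, limit

# Hypothetical layer: 784 inputs (a flattened 28x28 image) to 128 hidden units.
W, b, limit = glorot_uniform_layer(fan_in=784, fan_out=128, seed=0)
print(f"{len(W)}x{len(W[0])} weights in [-{limit:.4f}, {limit:.4f}]")
```

For ReLU layers, He initialization, which scales by fan-in only, is the more common choice. No scheme guarantees avoiding bad local minima; good initialization only makes effective training more likely.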

Multilayer Perceptron Implementation

When building an MLP, consider the following to maximize performance and avoid common pitfalls:

- Model Architecture: Choose the number of hidden layers and neurons based on the complexity of the task; deeper networks suit more complex tasks.

- Data Preprocessing: Clean and normalize the input data (e.g., scale values to the range 0 to 1) to improve accuracy and speed up training.

- Initialization of Weights and Biases: Initialize weights randomly, rather than to identical values, so that neurons learn different features; this supports effective learning and reduces the risk of getting stuck in poor local minima. Biases are commonly set to zero or small values.

- Hyperparameter Experimentation: Try different combinations of learning rate, batch size, and number of epochs to find the best configuration.

- Training Duration and Early Stopping: Monitor the model's performance during training, and use early stopping to halt training when performance on a validation set begins to deteriorate.

- Optimization Algorithm Selection: Choose a suitable optimizer; Adam and SGD are common choices for MLPs.

- Preventing Overfitting: Use regularization strategies such as dropout to keep the model from memorizing the training data and to encourage it to learn general patterns.

- Model Evaluation and Metrics: Assess the model with metrics such as accuracy, precision, and recall to get a complete picture of its performance.

- Iterative Refinement: Adjust the model's architecture or hyperparameters regularly based on evaluation results.
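
Two items from this list, min-max scaling and the precision/recall metrics, are simple enough to sketch directly. The functions below are hypothetical helpers written in plain Python for illustration; libraries such as scikit-learn provide tested equivalents (`MinMaxScaler`, `precision_score`, `recall_score`). The labels in the usage example are made up.

```python
def min_max_scale(column):
    """Rescale a list of numbers to the range [0, 1].

    A constant column is mapped to all zeros to avoid dividing by zero.
    """
    lo, hi = min(column), max(column)
    if hi == lo:
        return [0.0 for _ in column]
    return [(x - lo) / (hi - lo) for x in column]

def accuracy_precision_recall(y_true, y_pred, positive=1):
    """Binary classification metrics from true and predicted labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    accuracy = correct / len(pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0  # of predicted positives, how many are right
    recall = tp / (tp + fn) if tp + fn else 0.0     # of actual positives, how many were found
    return accuracy, precision, recall

print(min_max_scale([10, 20, 30]))

# Made-up labels for illustration.
y_true = [1, 0, 1, 1, 0, 0]
y_pred = [1, 0, 0, 1, 1, 0]
print(accuracy_precision_recall(y_true, y_pred))
```

When scaling real data, compute the minimum and maximum on the training set only and reuse those values for the validation and test sets, so that no information leaks from the held-out data.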

Understanding MLPs is essential to understanding machine learning, because they form the basis of many more sophisticated deep learning models.

Convolutional neural network vs multilayer perceptron

| Feature | Convolutional Neural Network (CNN) | Multilayer Perceptron (MLP) |
|---|---|---|
| Architecture | Includes convolutional, pooling, and fully connected layers | Composed only of fully connected (dense) layers |
| Input Type | Primarily works with grid-like data (e.g., images, video) | Works with flattened vector input |
| Feature Extraction | Automatically extracts spatial features via convolutional layers | Requires manual feature extraction or flattening of the data |
| Parameter Sharing | Yes: the same filters (kernels) are applied across the input | No: each weight is unique to its connection |
| Local Connectivity | Yes: neurons connect only to local regions of the input | No: every neuron connects to every input |
| Computational Efficiency | More efficient for image and spatial data | Less efficient with large inputs such as images |
| Performance on Images | Very high; state of the art in computer vision tasks | Poor unless features are carefully engineered |
| Overfitting | Lower risk due to fewer parameters and the use of pooling | Higher risk due to dense connections and many parameters |
| Interpretability | Learned features (e.g., filters) can sometimes be visualized | Harder to interpret what hidden layers learn |
| Common Applications | Image classification, object detection, medical imaging | Tabular data, basic classification and regression tasks |
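
The "Parameter Sharing" and "Overfitting" rows can be made concrete by counting parameters. The sketch below uses the standard formulas, (inputs × units) + units for a dense layer and (kernel height × kernel width × input channels + 1) × filters for a 2-D convolution layer. The layer sizes (128 dense units, 32 filters of size 3×3) are arbitrary examples. The two layers are not functionally equivalent, since they produce differently shaped outputs, so this illustrates parameter sharing rather than comparing equal-capacity models.

```python
def dense_params(num_inputs, num_units):
    """Weights plus biases for a fully connected layer."""
    return num_inputs * num_units + num_units

def conv2d_params(kernel_h, kernel_w, in_channels, num_filters):
    """Weights plus biases for a 2-D convolution layer.

    Each filter is shared across all spatial positions, so the count does
    not depend on the image's height or width.
    """
    return (kernel_h * kernel_w * in_channels + 1) * num_filters

# 28x28 grayscale image: flattened into an MLP layer vs. a 3x3 convolution.
print("MLP, 28x28x1 -> 128 units:   ", dense_params(28 * 28 * 1, 128))
print("CNN, 3x3 conv, 32 filters:   ", conv2d_params(3, 3, 1, 32))

# 224x224 RGB image: the dense count grows with image size, the conv count does not.
print("MLP, 224x224x3 -> 128 units: ", dense_params(224 * 224 * 3, 128))
print("CNN, 3x3 conv, 32 filters:   ", conv2d_params(3, 3, 3, 32))
```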
