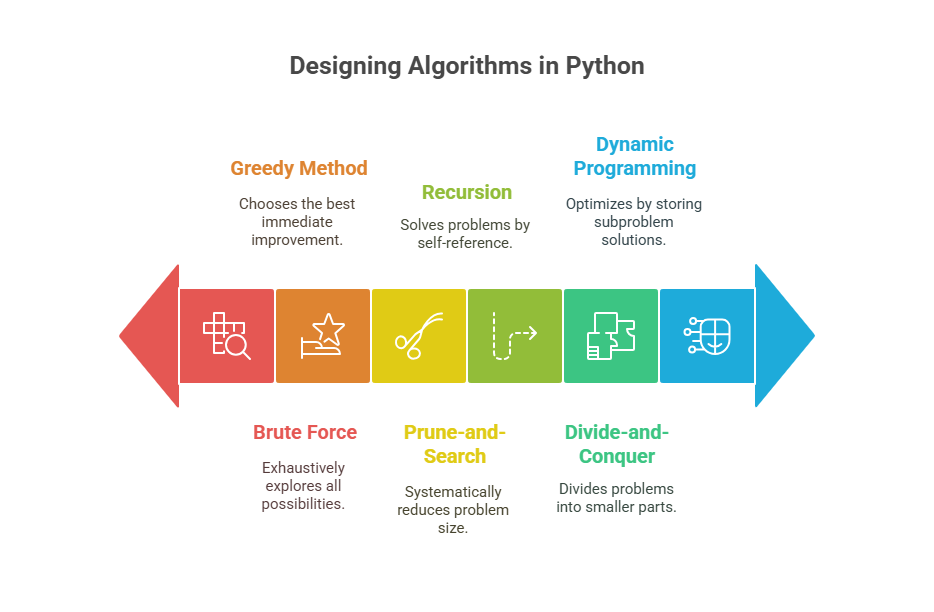

Designing Algorithms in Python

A methodical process for completing a task in a limited amount of time is called an algorithm. Two essential elements in computer science and engineering are data structures and algorithms, the latter of which are methodical processes for resolving issues. Developing effective programs requires an understanding of algorithm design.

Various algorithm design techniques aid in problem solving and can produce more concise and readable answers. Performance is greatly impacted by the algorithm design technique used, particularly in real-time systems.

The following are some methods for creating algorithms that have been discussed:

Recursion

Explanation

Recursion is a crucial algorithmic design pattern that defines a function in terms of itself. A recursive step that advances towards a base case by making one or more recursive calls is usually included, along with one or more base cases that do not employ recursion. By eliminating intricate case studies and nested loops, recursion enables the concise exploitation of repeated structures in problems, resulting in more accessible algorithm descriptions.

It has a price, too, because the Python interpreter needs to keep track of activation records for every nested call, which uses memory. Recursion should often be saved for quick algorithms and avoided for those with quadratic or more running time inadequacies, since this might result in numerous method calls that cause stack overflow errors or performance problems.

Recursion Types:

Linear recursion:Each function body invocation performs only one recursive call in linear recursion. The recursion trace appears as a single call sequence. The excellent Fibonacci function and factorial function compute n! via linear recursion. Despite its “binary” name, the binary search algorithm follows only one recursive branch during execution, making it linear recursion.

Binary recursion: Binary recursion algorithms often divide the problem size in half at each step, resulting in a recursion depth of O(log n) and memory usage of O(log n), but their total running time can still be O(n) due to the cumulative constant-time work across all function calls. The binary sum algorithm calls 2n-1 functions.

Multiple recursion: A function can initiate three or more recursive calls from a single body invocation in multiple recursion. This differentiates from linear and binary recursion (one or two calls).

Factorial Function: A classic mathematical function with a naturally recursive definition is the factororial function. The recursive aspect of an iterative solution makes it a good example for illustrating recursion, even if it is also easy and avoids the overhead of recursion.

Binary Search: An illustration of linear recursion, this algorithm executes in O(logn) time. By periodically halving the search interval, it effectively searches a sorted sequence. The target and the centre element are compared by the algorithm; if they don’t match, the search moves on to the half where the target might be.

Divide-and-Conquer

Explanation

In order to solve an issue, this algorithmic design pattern recursively divides it into two or more discrete subproblems. There are three steps in the pattern.

Divide: Solve the issue immediately if the input size is less than a predetermined threshold. Otherwise, create two or more disjoint subgroups from the supplied data.

Conquer: The divide-and-conquer technique, “Conquer” means recursively solving subproblems. This method divides an issue into related subproblems. If the input size is below a threshold, the problem is addressed directly; otherwise, disjoint subgroups are created.

Combine: In the divide-and-conquer algorithmic design pattern, “Combine” is the third phase. The three primary stages of this design are usually Divide, Conquer, and Combine. In order to create a comprehensive solution for the initial problem, the Combine step aims to combine the answers produced from the subproblems during the “Conquer” phase.

Algorithms Using This Approach: Two well-known algorithms that employ the divide-and-conquer strategy for sorting are merge-sort and quick-sort. These sorting algorithms usually have Python implementations.

Example:

def merge_sort(arr):

"""

Sorts a list using the Merge Sort algorithm, which is a classic

example of the Divide-and-Conquer paradigm.

The algorithm works in three main steps:

1. Divide: Recursively divide the unsorted list into two halves

until each sublist contains only one element (which is by definition sorted).

2. Conquer: Recursively sort each of those sublists.

3. Combine: Merge the sorted sublists back together to form a single sorted list.

"""

# Base case: If the list has 0 or 1 element, it's already sorted.

if len(arr) <= 1:

return arr

# --- 1. Divide ---

# Find the middle point of the list.

mid = len(arr) // 2

# Create two sublists: left_half and right_half.

left_half = arr[:mid]

right_half = arr[mid:]

print(f" Dividing: Left Half = {left_half}, Right Half = {right_half}")

# --- 2. Conquer ---

# Recursively call merge_sort on each half.

# This step will continue dividing until base cases (single elements) are reached,

# and then it will start returning sorted sublists.

left_sorted = merge_sort(left_half)

right_sorted = merge_sort(right_half)

# --- 3. Combine ---

# Merge the two sorted halves back into a single sorted list.

return merge(left_sorted, right_sorted)

def merge(left, right):

"""

Helper function for merge_sort.

Merges two already sorted lists into a single sorted list.

"""

merged_list = []

i = j = 0 # Pointers for left and right lists

# Compare elements from both lists and add the smaller one to merged_list

while i < len(left) and j < len(right):

if left[i] < right[j]:

merged_list.append(left[i])

i += 1

else:

merged_list.append(right[j])

j += 1

# Add any remaining elements from the left list (if any)

while i < len(left):

merged_list.append(left[i])

i += 1

# Add any remaining elements from the right list (if any)

while j < len(right):

merged_list.append(right[j])

j += 1

print(f" Merging: {left} and {right} into {merged_list}")

return merged_list

# --- Example Usage ---

if _name_ == "_main_":

unsorted_data = [38, 27, 43, 3, 9, 82, 10, 1]

print(f"Original Unsorted List: {unsorted_data}\n")

sorted_data = merge_sort(unsorted_data)

print(f"\nFinal Sorted List: {sorted_data}")Output:

Original Unsorted List: [38, 27, 43, 3, 9, 82, 10, 1]

Dividing: Left Half = [38, 27, 43, 3], Right Half = [9, 82, 10, 1]

Dividing: Left Half = [38, 27], Right Half = [43, 3]

Dividing: Left Half = [38], Right Half = [27]

Merging: [38] and [27] into [27, 38]

Dividing: Left Half = [43], Right Half = [3]

Merging: [43] and [3] into [3, 43]

Merging: [27, 38] and [3, 43] into [3, 27, 38, 43]

Dividing: Left Half = [9, 82], Right Half = [10, 1]

Dividing: Left Half = [9], Right Half = [82]

Merging: [9] and [82] into [9, 82]

Dividing: Left Half = [10], Right Half = [1]

Merging: [10] and [1] into [1, 10]

Merging: [9, 82] and [1, 10] into [1, 9, 10, 82]

Merging: [3, 27, 38, 43] and [1, 9, 10, 82] into [1, 3, 9, 10, 27, 38, 43, 82]

Final Sorted List: [1, 3, 9, 10, 27, 38, 43, 82]Dynamic Programming

Explanation

Dynamic programming is an approach for designing algorithms that frequently converts exponentially time-consuming problems into polynomial-time procedures. Though it is usually used for optimisation problems where solutions to subproblems are saved to prevent recomputation, it is comparable to divide-and-conquer. The resulting algorithms are frequently straightforward and frequently use nested loops to populate a table.

Simple Subproblems: It is possible to continually divide the global optimisation problem into more manageable subproblems. With a few indices, these subproblems ought to be parameterizable.

Subproblem Optimisation: The best solution to a problem must be made up of the best solutions to its smaller issues. Another name for this is the optimum substructure.

Subproblem Overlap: Common subproblems can be optimal solutions to unrelated subproblems. This overlap is important because it prevents duplicate computations by allowing the solution to a subproblem to be computed and stored once, allowing it to be utilised repeatedly.

Greedy Method

Explanation

The greedy technique is an algorithmic design pattern that is used to solve optimisation problems in which maximising or minimising a structure’s property is the aim. Starting with an initial situation, the method entails making a series of decisions and iteratively selecting the one that results in the greatest immediate cost improvement. An ideal answer for every issue is not always guaranteed by this approach.

Huffman Coding Algorithm: Another example of the greedy method in action is the Huffman Coding Algorithm, which creates an optimal encoding.

Minimum Spanning Tree (MST) Algorithms: A tree that connects every vertex in a given linked graph while minimising the sum of the edge weights is known as a Minimum Spanning Tree (MST), and it is a key idea in graph theory. It operates as a tree-like spanning subgraph. This data format is very helpful for situations like figuring out how much cable is required to link every computer in an office building, when the objective is to connect every component of a network or system.

Brute Force

Explanation

Usually, it works by listing every potential configuration of the inputs involved and then choosing the optimal one. The brute-force approach in pattern-matching algorithms is methodically examining each possible location for a pattern (P) in a given text (T). Although it takes a simple approach, in the worst situation, this method is frequently ineffective. The worst-case running time for pattern matching, for example, is O(nm), where ‘n’ is the text length and’m’ is the pattern length.

This is due to the fact that it may do up to’m’ character comparisons for every possible starting place in the text. This method’s major inefficiency, especially when compared to algorithms like Knuth-Morris-Pratt, is that when a mismatch occurs, it restarts the comparison process from the next incremental spot of the pattern, discarding all information gathered from successful character comparisons.

Application: A brute-force method is frequently used in a rudimentary implementation of pattern matching, which entails locating a string pattern within a bigger text. In the worst scenario, this method runs in O(mn) time.

Prune-and-Search

Explanation:

The Prune-and-Search algorithmic design pattern, sometimes referred to as decrease-and-conquer, is a method for gradually lowering the problem size in order to solve problems on a set of n items. This entails solving the smaller problem recursively after removing a portion of the n objects. A brute-force approach is used to solve the problem directly after it has been reduced to a collection of items of a consistent size. In certain situations, the reduction process might be repeated until a brute-force approach is used in place of recursion. As an illustration of this design pattern, the binary search algorithm is mentioned, and it is seen that the selection problem may be resolved using this method in O(n) time.

Application: The prune-and-search design pattern is exemplified by the binary search method, which is also an example of linear recursion.

Understanding the features and trade-offs of these design methods is essential for creating software that is efficient. They offer several frameworks for creating algorithms.