Amid the rapid evolution of cloud-native technology, Kubernetes has become the foundation of modern container orchestration. Yet even experienced developers often overlook the component that powers the whole system: the Kubernetes API server (kube-apiserver). The API server is the central management entity and the “frontend” of the Kubernetes control plane, handling all communication both within the cluster and with external clients.

The Gatekeeper of Cluster State

At its core, the API server is a communication hub. Every interaction must pass through it, whether an automated system is scaling a workload or an administrator is launching a new application from the command line. Its RESTful interface gives users, internal components, and tools like kubectl a uniform way to communicate with the cluster.

Arguably its most important function is being the only point of entry to the cluster’s state. Kubernetes stores the cluster’s “source of truth” (which pods should be running, which services exist, and what configuration is set) in etcd, a distributed key-value store. Only the API server reads from and writes to etcd directly; every other component, including the scheduler, the controllers, and the kubelets, reads and changes cluster state through the API server. This centralized control is what allows state changes to be handled securely and consistently.
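
To make the gatekeeper idea concrete, here is a toy model, assuming a plain Python dict in place of etcd. The names `ToyAPIServer` and `Conflict` are hypothetical and this is not how kube-apiserver is implemented; the sketch only shows that other code never gets a handle on the store, and that every write carries a version number, loosely mirroring how the real API server uses `resourceVersion` to reject updates based on stale data.

```python
class Conflict(Exception):
    """A write was based on a stale version (the real API server answers with HTTP 409)."""


class ToyAPIServer:
    """Toy model: the only object that holds the backing store (a dict standing in for etcd)."""

    def __init__(self):
        self._etcd = {}     # key -> (version, object); never handed to other components
        self._revision = 0  # monotonically increasing, loosely like etcd's revision

    @staticmethod
    def _key(kind, namespace, name):
        return f"{kind}/{namespace}/{name}"

    def read(self, kind, namespace, name):
        """Return (version, copy of object), or None if the object does not exist."""
        entry = self._etcd.get(self._key(kind, namespace, name))
        if entry is None:
            return None
        version, obj = entry
        return version, dict(obj)

    def write(self, kind, namespace, name, obj, expected_version=None):
        """Create (expected_version=None) or update (expected_version=last version read)."""
        key = self._key(kind, namespace, name)
        current = self._etcd.get(key)
        current_version = current[0] if current else None
        if current_version != expected_version:
            raise Conflict(f"{key}: expected version {expected_version}, found {current_version}")
        self._revision += 1
        self._etcd[key] = (self._revision, dict(obj))
        return self._revision


if __name__ == "__main__":
    api = ToyAPIServer()
    v1 = api.write("pods", "default", "web", {"image": "nginx"})
    api.write("pods", "default", "web", {"image": "nginx:1.27"}, expected_version=v1)
    try:
        # A second writer still holding v1 is rejected instead of silently overwriting.
        api.write("pods", "default", "web", {"image": "httpd"}, expected_version=v1)
    except Conflict as err:
        print("rejected:", err)
```

Because all writes funnel through one object, two clients racing to update the same pod cannot both succeed with outdated data; the loser has to re-read and retry.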

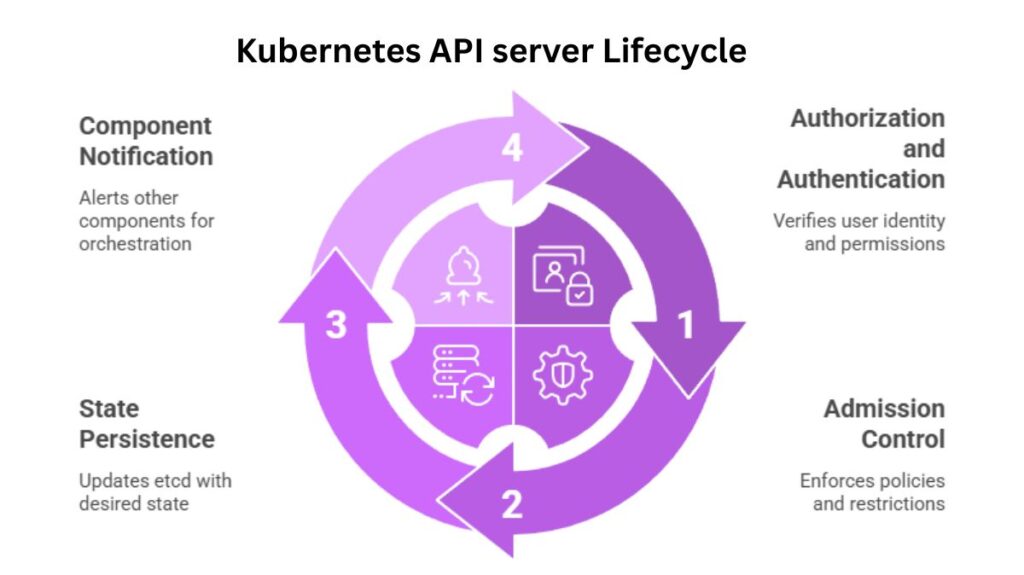

The Lifecycle of an API Server Request

Security is built into every request the API server handles; it is not an afterthought. When a request arrives, for example when a developer tries to create a new pod, the server runs it through a multi-stage process to make sure it is safe and valid:

- Authentication and Authorization: The server first confirms the identity of the user or service account making the request (authentication). Once the requester is identified, it checks whether they have the required permissions (authorization), typically using Role-Based Access Control (RBAC). For instance, a cluster administrator may be granted broad, cluster-wide authority, whereas a developer may be limited to a particular namespace.

- Admission Control: Even an authorized request must still pass through admission controllers. These specialized modules can intercept, modify, or reject requests according to cluster or organizational policy. By enforcing constraints such as resource limits and security standards, they help ensure, for example, that a single deployment cannot consume all of the cluster’s resources.

- State Persistence: After the request has been validated and admitted, the API server writes the new desired state to etcd.

- Component Notification: This is where the “orchestration” happens. The API server does not create pods itself. Instead, components such as the kube-scheduler and kube-controller-manager (and the kubelet on each node) “watch” the API server and are notified when objects change. Those components then take whatever steps are needed to bring the cluster’s current state in line with the new desired state recorded in etcd.
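
The stages above can be modeled as a simple pipeline. This is a deliberately simplified, hypothetical sketch: the token table, the role table, the two admission functions, and the `cpuMillis` field are all invented for illustration. The real kube-apiserver uses pluggable authenticators, the RBAC authorizer, mutating and validating admission plugins or webhooks, and etcd-backed watch streams, none of which are reproduced here. Only create requests are modeled.

```python
from dataclasses import dataclass, field


class Rejected(Exception):
    """Raised when any stage refuses the request."""


@dataclass
class Request:
    token: str                                # credential presented by the client
    verb: str                                 # only "create" is modeled here
    resource: str                             # e.g. "pods"
    namespace: str
    body: dict = field(default_factory=dict)  # must contain "name"


# Hypothetical credentials and RBAC-like permissions.
TOKENS = {"t-admin": "alice", "t-dev": "bob"}
ROLES = {
    "alice": {"namespaces": "*", "verbs": {"create", "get", "delete"}},  # cluster-wide
    "bob": {"namespaces": {"team-a"}, "verbs": {"create", "get"}},        # one namespace
}


def authenticate(req):
    user = TOKENS.get(req.token)
    if user is None:
        raise Rejected("401 Unauthorized")
    return user


def authorize(user, req):
    role = ROLES.get(user, {})
    scope = role.get("namespaces", set())
    in_scope = scope == "*" or req.namespace in scope
    if not in_scope or req.verb not in role.get("verbs", set()):
        raise Rejected(f"403 Forbidden: {user} may not {req.verb} {req.resource} in {req.namespace}")


def default_cpu(req):
    # Mutating admission (hypothetical policy): add a default CPU limit in millicores.
    req.body.setdefault("cpuMillis", 500)


def cap_cpu(req):
    # Validating admission (hypothetical policy): refuse anything above 4 CPUs.
    if req.body["cpuMillis"] > 4000:
        raise Rejected("admission denied: cpuMillis > 4000")


def handle(req, etcd, watchers):
    """Run one create request through the pipeline and return the stored key."""
    user = authenticate(req)              # 1a. authentication: who is calling?
    authorize(user, req)                  # 1b. authorization: may they do this?
    for admit in (default_cpu, cap_cpu):  # 2. admission: mutate first, then validate
        admit(req)
    key = f"{req.resource}/{req.namespace}/{req.body['name']}"
    etcd[key] = dict(req.body)            # 3. persist the desired state
    for notify in watchers:               # 4. notify watching components
        notify("ADDED", key, etcd[key])
    return key
```

For example, `handle(Request("t-dev", "create", "pods", "team-a", {"name": "web"}), {}, [print])` stores the pod with a defaulted `cpuMillis` and prints an `ADDED` event, while the same request aimed at another namespace stops at the authorization step and never reaches the store.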

A Platform Built for Growth

Its extensibility is a large part of why Kubernetes has grown so popular. The API server lets developers add custom resource types and capabilities without changing the Kubernetes codebase. Using Custom Resource Definitions (CRDs) and the API aggregation layer, organizations can define their own API objects, allowing Kubernetes to manage complex, specialized workloads tailored to particular business requirements.
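
As an illustration, the sketch below builds a CustomResourceDefinition for a hypothetical `Backup` resource in a hypothetical `example.com` API group and prints it as JSON, which `kubectl apply -f` accepts just as it accepts YAML. The resource name, group, and fields are invented; the surrounding structure follows the `apiextensions.k8s.io/v1` CRD format.

```python
import json


def make_crd(group, kind, plural, spec_properties):
    """Return a CustomResourceDefinition manifest (apiextensions.k8s.io/v1) as a dict."""
    return {
        "apiVersion": "apiextensions.k8s.io/v1",
        "kind": "CustomResourceDefinition",
        # A CRD's name must be "<plural>.<group>".
        "metadata": {"name": f"{plural}.{group}"},
        "spec": {
            "group": group,
            "scope": "Namespaced",
            "names": {"plural": plural, "singular": kind.lower(), "kind": kind},
            "versions": [{
                "name": "v1",
                "served": True,   # this version is exposed by the API server
                "storage": True,  # this version is the one persisted in etcd
                "schema": {"openAPIV3Schema": {
                    "type": "object",
                    "properties": {"spec": {"type": "object", "properties": spec_properties}},
                }},
            }],
        },
    }


if __name__ == "__main__":
    crd = make_crd("example.com", "Backup", "backups", {
        "schedule": {"type": "string"},
        "retentionDays": {"type": "integer"},
    })
    print(json.dumps(crd, indent=2))
```

Once a CRD like this is registered, the API server serves `Backup` objects at paths such as `/apis/example.com/v1/namespaces/<namespace>/backups`, and kubectl can manage them like built-in resources.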

This power is accessible through several interfaces. Developers can call the REST API directly over HTTP, but most use kubectl, which wraps REST calls in single commands and handles authentication using a kubeconfig file. Official client libraries in languages such as Go, Python, and Java let applications running inside the cluster discover the API server and authenticate with service account tokens.
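
To show what the client libraries do under the hood, here is a standard-library-only sketch of the REST request behind listing pods from inside a pod. It assumes the default service account mount at `/var/run/secrets/kubernetes.io/serviceaccount` and the `KUBERNETES_SERVICE_HOST`/`KUBERNETES_SERVICE_PORT` environment variables Kubernetes sets in pods; the helper names are hypothetical. In real code, the official Python client's `config.load_incluster_config()` handles this discovery for you.

```python
import json
import os
import ssl
import urllib.request

# Default mount point of the service account token and CA bundle inside a pod.
SA_DIR = "/var/run/secrets/kubernetes.io/serviceaccount"


def read_token(sa_dir=SA_DIR):
    """Read the pod's service account bearer token."""
    with open(os.path.join(sa_dir, "token")) as f:
        return f.read().strip()


def build_list_pods_request(namespace, token, env=os.environ):
    """Build (but do not send) roughly the REST call behind `kubectl get pods -n <namespace>`."""
    host = env["KUBERNETES_SERVICE_HOST"]
    port = env.get("KUBERNETES_SERVICE_PORT", "443")
    if ":" in host:  # bracket IPv6 literals so the URL stays valid
        host = f"[{host}]"
    url = f"https://{host}:{port}/api/v1/namespaces/{namespace}/pods"
    return urllib.request.Request(
        url,
        headers={"Authorization": f"Bearer {token}", "Accept": "application/json"},
    )


def send(request, sa_dir=SA_DIR):
    """Send the request, verifying the API server's certificate with the mounted CA."""
    ctx = ssl.create_default_context(cafile=os.path.join(sa_dir, "ca.crt"))
    with urllib.request.urlopen(request, context=ctx) as resp:
        return json.load(resp)
```

Inside a pod whose service account is allowed to list pods, `send(build_list_pods_request("default", read_token()))` would return the pod list as parsed JSON. Splitting building from sending keeps the request logic testable without a cluster.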

The High Stakes of API Management

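Because the API server is so central, it is a constant concern for Site Reliability Engineers. As the cluster’s gateway, its downtime can halt cluster management: workloads that are already running generally keep running, but nothing can be deployed, scaled, or reconfigured until it recovers. This is why production clusters use high-availability (HA) setups, such as running multiple API server instances, so the control plane keeps working if one instance fails.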
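
HA control planes commonly place a load balancer in front of several kube-apiserver instances (kubeadm, for instance, takes that address via `--control-plane-endpoint`), and each instance exposes a `/readyz` endpoint for health checks. The sketch below is a hypothetical, simplified model of the load balancer's selection logic, not a real load balancer: the `probe` function is injected so the code runs without a cluster, and the addresses are made up. A real probe would issue an HTTPS GET against each instance's `/readyz`.

```python
import itertools
from typing import Callable, Iterable, List


class ApiServerPool:
    """Round-robin over API server instances, skipping any that fail a readiness probe."""

    def __init__(self, endpoints: Iterable[str], probe: Callable[[str], bool]):
        self.endpoints: List[str] = list(endpoints)
        self.probe = probe  # returns True if the given /readyz URL reports ready
        self._cycle = itertools.cycle(self.endpoints)

    def pick(self) -> str:
        # Try each endpoint at most once per call before giving up.
        for _ in range(len(self.endpoints)):
            candidate = next(self._cycle)
            if self.probe(candidate + "/readyz"):
                return candidate
        raise RuntimeError("no healthy API server instance available")


if __name__ == "__main__":
    down = {"https://10.0.0.2:6443"}  # pretend this instance has failed
    pool = ApiServerPool(
        ["https://10.0.0.1:6443", "https://10.0.0.2:6443", "https://10.0.0.3:6443"],
        probe=lambda url: url.rsplit("/readyz", 1)[0] not in down,
    )
    print([pool.pick() for _ in range(4)])
```

With one of three instances down, requests simply rotate between the two healthy ones, which is the property that keeps the control plane usable through a single failure.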

The API server must also scale as clusters grow. In high-traffic environments it may need to handle thousands of requests per second without introducing noticeable delays. Features such as admission controllers add latency and processing cost to every request they inspect, so operators must balance policy enforcement against performance.

Operationally, the API server is also a primary source of monitoring and debugging data. It exposes metrics that tools such as Prometheus collect and Grafana visualizes, and together with its logs these help operators detect faults and maintain cluster health.

Conclusion

The Kubernetes API server is much more than an access point: it is the intelligent core of Kubernetes container orchestration. By managing state, enforcing security, and connecting the cluster’s components, it enables the automation and scalability that modern enterprises rely on. As organizations push the limits of cloud-native applications, understanding and optimizing the API server becomes essential.

Where is the kube API server?

The Kubernetes API server runs on the control plane node (historically called the master node) of a Kubernetes cluster. It exposes the Kubernetes API and handles all requests from users, CLI tools like kubectl, and other cluster components. In most self-managed setups it runs as a containerized service on the control plane node (usually a static pod) and listens on port 6443 by default. In managed Kubernetes services, the cloud provider runs the control plane and abstracts away where the API server is located.
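
From the client side, the quickest way to see where your API server lives is the `server` URL in your kubeconfig. The sketch below assumes you pass it the output of `kubectl config view --minify -o json` (which limits the output to the current context) and extracts the host and port; the function name is hypothetical, and the sample addresses in the usage are made up.

```python
import json
from urllib.parse import urlsplit


def api_server_endpoint(kubeconfig_json):
    """Return (host, port) of the cluster in `kubectl config view --minify -o json` output."""
    config = json.loads(kubeconfig_json)
    server = config["clusters"][0]["cluster"]["server"]
    parts = urlsplit(server)
    # A URL without an explicit port uses the scheme's default (443 for https).
    return parts.hostname, parts.port or (443 if parts.scheme == "https" else 80)
```

A self-managed kubeadm cluster typically reports port 6443 here, while a managed service's endpoint is often a provider-assigned hostname on the default HTTPS port.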

Does Kubernetes have an API?

Yes. The Kubernetes API is the system’s central, powerful interface and the fundamental element that lets users, tools, and system components communicate with the cluster. It is used to create, update, delete, and otherwise manage resources such as pods, services, and deployments. The kube-apiserver serves the API, which can be accessed through kubectl, client libraries, or direct REST requests. Because it follows REST conventions, it provides a consistent foundation for automation, orchestration, and cluster management.
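
Those REST conventions are regular enough to capture in a few lines. The sketch below builds resource URL paths: core-group resources such as pods live under `/api/v1`, while named groups such as `apps` live under `/apis/<group>/<version>`. The helper names are hypothetical, and the verb table covers only the common operations.

```python
def resource_path(group, version, resource, namespace=None, name=None):
    """Build an API server resource path; use group="" for the core group (pods, services)."""
    path = f"/api/{version}" if group == "" else f"/apis/{group}/{version}"
    if namespace:
        path += f"/namespaces/{namespace}"
    path += f"/{resource}"
    if name:
        path += f"/{name}"
    return path


# How common API operations map onto HTTP methods.
VERB_TO_METHOD = {
    "create": "POST",
    "get": "GET",
    "list": "GET",
    "update": "PUT",
    "patch": "PATCH",
    "delete": "DELETE",
}
```

For example, `kubectl delete deployment web -n prod` corresponds to a `DELETE` request on `/apis/apps/v1/namespaces/prod/deployments/web`.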

Is kube API server a pod?

It depends on how the cluster is configured, but in many clusters the Kubernetes API server does run as a pod.

In clusters built with tools such as kubeadm, the kube-apiserver runs as a static Pod on the control plane node. This means the kubelet on that node manages it directly from a local manifest file, rather than the Kubernetes scheduler placing it.
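
As a rough way to check this on a kubeadm node, the sketch below looks for the API server manifest in kubeadm’s default static pod directory, `/etc/kubernetes/manifests`, and returns the name of the read-only “mirror pod” the kubelet publishes to the API (static pod name plus `-<node-name>`). It assumes the manifest’s pod is named `kube-apiserver` in `kube-system`, as in kubeadm’s defaults; other tools may use different paths or names, and the helper names are hypothetical.

```python
import os

KUBEADM_MANIFEST_DIR = "/etc/kubernetes/manifests"  # kubeadm's default static pod path


def list_manifests(manifest_dir=KUBEADM_MANIFEST_DIR):
    """Return the manifest file names in the static pod directory, or [] if it is absent."""
    try:
        return sorted(os.listdir(manifest_dir))
    except FileNotFoundError:
        return []


def apiserver_mirror_pod(node_name, manifest_files):
    """Return "namespace/name" of the API server's mirror pod, or None if no manifest exists."""
    if "kube-apiserver.yaml" not in manifest_files:
        return None
    # Assumes the manifest's pod is named "kube-apiserver" in kube-system (kubeadm default).
    return f"kube-system/kube-apiserver-{node_name}"
```

On a kubeadm control plane node named `cp-1`, this points at `kube-system/kube-apiserver-cp-1`. To change or stop such a static pod, you edit or remove the manifest file, which the kubelet watches; the scheduler is never involved.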

In other setups, such as some managed services or custom deployments, it may instead run as a regular system process (for example, a systemd service).

Thus, although it is frequently a pod, it need not be one.