Kubernetes, often shortened to K8s, is an open-source system for automating the deployment, scaling, and management of containerized applications. It was originally designed by Google engineers, drawing heavily on their experience with Google’s internal cluster manager, Borg. After Google donated the project to the Cloud Native Computing Foundation (CNCF), it became the de facto industry standard for container orchestration. The name comes from the Greek word for “helmsman” or “pilot,” reflecting its role in steering complex applications across distributed infrastructure.

Understanding the Necessity of Orchestration

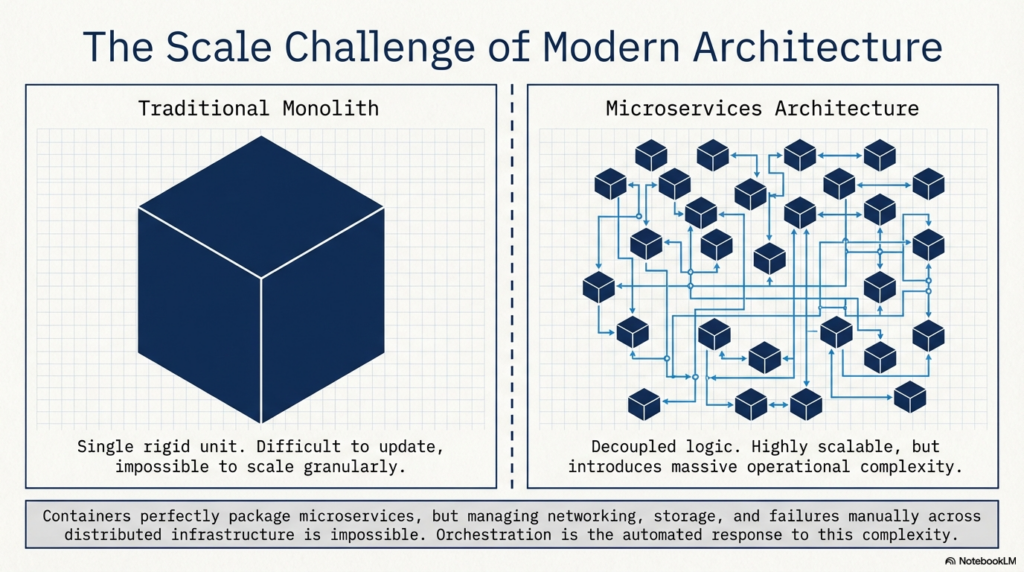

The shift from conventional monolithic applications to microservices created a significant scaling problem. Instead of a single large program, modern software is often split into hundreds or even thousands of small, independent containerized components. Containers neatly package code and its dependencies, but managing them by hand at that scale, including their networking, storage, failure recovery, and updates, is practically impossible.

Container orchestration is the automated management of these containers across many hosts. By taking over the operational “toil” of infrastructure, it ensures that each service runs on suitable cluster nodes at the right time, freeing developers to focus on improving applications rather than on the underlying hardware.

The Core Architecture of Kubernetes

Kubernetes divides its architecture into two parts: a control plane and a set of worker nodes.

- The Control Plane (The “Brain”): The control plane maintains the cluster’s “desired state.” Its main components are the API server, the front end through which users and all other components communicate with the cluster; etcd, a highly available key-value store that holds all cluster data; the scheduler, which decides which node each new pod should run on by evaluating resource availability and workload requirements; and the controller manager, which continuously watches the cluster and acts to make its current state match the desired state.

- Worker Nodes: Worker nodes are the physical or virtual machines that run the actual application workloads. Every node runs a kubelet, the agent that communicates with the control plane and ensures that the containers assigned to its pods are running; kube-proxy, which maintains the network rules that route traffic to Services; and a container runtime (such as containerd) that actually runs the containers.

- Pods and Services: A pod is the smallest deployable unit in Kubernetes. It usually holds a single container, although it can group several tightly coupled containers that share networking and storage. Because pods are ephemeral and receive new IP addresses each time they are recreated, Services provide an abstraction layer with a stable network endpoint (a virtual IP and a DNS name) that routes traffic to whichever matching pods currently exist.
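To make the Pod/Service relationship more concrete, here is a minimal Python sketch under a greatly simplified model: the `Pod` and `Service` classes below are hypothetical stand-ins, not the Kubernetes API objects, and the selector supports only exact label matches. It shows how a Service keeps a stable DNS name while the set of pod IPs behind it changes as pods are destroyed and replaced.

```python
from dataclasses import dataclass


@dataclass
class Pod:
    """Hypothetical, simplified pod: a name, labels, an ephemeral IP, and readiness."""
    name: str
    labels: dict[str, str]
    ip: str
    ready: bool = True


@dataclass
class Service:
    """Hypothetical, simplified Service: a stable name plus an exact-match label selector."""
    name: str
    namespace: str
    selector: dict[str, str]

    @property
    def dns_name(self) -> str:
        # Cluster DNS form <service>.<namespace>.svc.<cluster-domain>,
        # assuming the default cluster domain "cluster.local".
        return f"{self.name}.{self.namespace}.svc.cluster.local"

    def endpoints(self, pods: list[Pod]) -> list[str]:
        # A pod backs the Service if it is ready and carries every
        # key/value pair of the selector among its labels.
        return [
            p.ip
            for p in pods
            if p.ready and all(p.labels.get(k) == v for k, v in self.selector.items())
        ]


pods = [
    Pod("web-a", {"app": "web"}, "10.0.0.11"),
    Pod("web-b", {"app": "web"}, "10.0.0.12"),
    Pod("db-a", {"app": "db"}, "10.0.0.21"),
]
svc = Service("web", "default", {"app": "web"})
before = svc.endpoints(pods)  # ['10.0.0.11', '10.0.0.12']

# web-a is destroyed and replaced by a new pod that gets a different IP.
pods[0] = Pod("web-c", {"app": "web"}, "10.0.0.13")
after = svc.endpoints(pods)   # ['10.0.0.13', '10.0.0.12']

print(svc.dns_name)  # web.default.svc.cluster.local
print(before, after)
```

In a real cluster this bookkeeping is handled by the control plane (through EndpointSlice objects) and kube-proxy rather than by application code; the sketch only illustrates the idea that clients address the stable name, not individual pods.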

Key Features and Capabilities

Kubernetes provides a range of automated capabilities that make it well suited to modern enterprise workloads.

- Self-Healing: When a container crashes, Kubernetes automatically restarts it. If an entire node fails, Kubernetes replaces the pods that were running on it with new ones on other healthy nodes (for pods managed by a controller such as a Deployment).

- Automated Scaling: Using the Horizontal Pod Autoscaler, Kubernetes can add pod replicas during periods of high demand and remove them when traffic drops, based on observed metrics such as CPU utilization, to make efficient use of resources.

- Load Balancing: To avoid bottlenecks and maintain high availability, Kubernetes distributes incoming network traffic across multiple instances of an application.

- Declarative Configuration: Instead of issuing step-by-step commands (the imperative approach), users describe a “desired end state” in configuration files (YAML or JSON). Kubernetes then works continuously in a reconciliation loop to reach that state and stay in it.

- Service Discovery and Storage Orchestration: Kubernetes lets containers find one another without manual network configuration and automatically mounts the storage systems (local or cloud-based) that workloads request.

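- Automated Rollouts and Rollbacks: Kubernetes rolls out application updates progressively, replacing instances at a controlled rate to minimize downtime. If the new version fails its health checks, the rollout stalls instead of taking down all existing instances, and the application can be rolled back to a previous stable revision.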
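Several of these features rest on one idea: compare the desired state with the observed state and act on the difference. The following Python sketch, assuming a single workload whose pods are tracked only by name, illustrates that reconciliation loop (which also yields self-healing) together with the scaling rule described in the Horizontal Pod Autoscaler documentation, desiredReplicas = ceil(currentReplicas * currentMetric / targetMetric), including its default 10% tolerance. The function names, the pod naming scheme, and the min/max replica defaults are hypothetical; the real controllers and HPA handle many more cases.

```python
import math


def reconcile(desired: int, observed: list[str], next_id: int) -> tuple[list[str], int]:
    """One pass of a toy reconciliation loop for a single workload.

    Creates or deletes pods until the observed count matches `desired`.
    Returns the updated pod list and the next unused pod id.
    """
    pods = list(observed)  # work on a copy; leave the caller's list untouched
    while len(pods) < desired:
        pods.append(f"web-{next_id}")  # hypothetical naming scheme
        next_id += 1
    while len(pods) > desired:
        pods.pop()  # remove surplus pods when scaling down
    return pods, next_id


def hpa_desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,   # hypothetical bounds for this example
    max_replicas: int = 10,
    tolerance: float = 0.1,  # HPA's default tolerance is 10%
) -> int:
    """desiredReplicas = ceil(currentReplicas * currentMetric / targetMetric)."""
    ratio = current_metric / target_metric
    if abs(1.0 - ratio) <= tolerance:
        desired = current_replicas  # close enough to target: don't scale
    else:
        desired = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, desired))


pods, next_id = reconcile(3, [], 0)          # ['web-0', 'web-1', 'web-2']
pods.remove("web-1")                         # simulate a crashed pod
pods, next_id = reconcile(3, pods, next_id)  # self-healing: adds 'web-3'

# Load rises: CPU averages 90% against a 60% target, so 3 replicas become 5.
desired = hpa_desired_replicas(len(pods), current_metric=90, target_metric=60)
pods, next_id = reconcile(desired, pods, next_id)
print(desired, pods)  # 5 ['web-0', 'web-2', 'web-3', 'web-4', 'web-5']
```

Note that the loop never asks how the cluster reached its current state; it only measures the gap to the desired state, which is why the same mechanism covers crash recovery and scaling.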

The Orchestration Workflow in Practice

Deploying an application to Kubernetes typically involves the following steps:

- Configuration: Developers describe the application in a manifest file, specifying the container image, the number of replicas, resource requests and limits, and other requirements.

- Submission: The manifest is submitted to the Kubernetes API server, which validates it and stores the desired state in etcd.

- Scheduling: The scheduler assigns each new pod to the most suitable node, first filtering out nodes that cannot satisfy the pod’s requirements and then ranking the remaining candidates.

- Execution: The kubelet on each chosen node has the container runtime pull the required container images and then starts the application’s containers.

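- Maintenance: The kubelet periodically runs health checks to keep the application stable: a failing liveness probe causes the container to be restarted, and a failing readiness probe temporarily removes the pod from its Service’s traffic.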
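The scheduling step can be illustrated with a simplified filter-then-score loop. This Python sketch is a toy built on stated assumptions, not kube-scheduler’s actual plugin framework: nodes that are not ready or lack room for the pod’s CPU and memory requests are filtered out, and the remaining nodes are scored by the average fraction of capacity they would have left (a “least allocated”-style heuristic that spreads load). The `Node`, `PodRequest`, and `schedule` names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Node:
    name: str
    cpu_capacity_m: int        # allocatable CPU in millicores
    mem_capacity_mi: int       # allocatable memory in MiB
    cpu_requested_m: int = 0   # sum of requests of pods already placed here
    mem_requested_mi: int = 0
    ready: bool = True


@dataclass
class PodRequest:
    name: str
    cpu_m: int
    mem_mi: int


def fits(node: Node, pod: PodRequest) -> bool:
    """Filter step: node is ready and has room for the pod's resource requests."""
    return (
        node.ready
        and node.cpu_requested_m + pod.cpu_m <= node.cpu_capacity_m
        and node.mem_requested_mi + pod.mem_mi <= node.mem_capacity_mi
    )


def score(node: Node, pod: PodRequest) -> float:
    """Score step: average fraction of CPU and memory left free after placement."""
    cpu_free = (node.cpu_capacity_m - node.cpu_requested_m - pod.cpu_m) / node.cpu_capacity_m
    mem_free = (node.mem_capacity_mi - node.mem_requested_mi - pod.mem_mi) / node.mem_capacity_mi
    return (cpu_free + mem_free) / 2


def schedule(pod: PodRequest, nodes: list[Node]) -> Optional[str]:
    """Return the chosen node's name, or None if no node fits."""
    feasible = [n for n in nodes if fits(n, pod)]
    if not feasible:
        return None
    best = max(feasible, key=lambda n: score(n, pod))
    # "Bind": account for the pod's requests on the chosen node.
    best.cpu_requested_m += pod.cpu_m
    best.mem_requested_mi += pod.mem_mi
    return best.name


nodes = [
    Node("node-a", 4000, 8192, cpu_requested_m=3000, mem_requested_mi=2048),
    Node("node-b", 4000, 8192, cpu_requested_m=1000, mem_requested_mi=4096),
    Node("node-c", 2000, 4096, ready=False),
]
first = schedule(PodRequest("web-0", cpu_m=500, mem_mi=512), nodes)
second = schedule(PodRequest("batch-0", cpu_m=3000, mem_mi=1024), nodes)
print(first, second)  # node-b None
```

If no node fits, the function returns None, mirroring how an unschedulable pod stays in the Pending state until resources free up.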

Enterprise Challenges and Management Platforms

Kubernetes is powerful, but operating it at scale raises challenges such as security governance, multitenancy, and cluster lifecycle management. For many businesses that run dozens of clusters across different environments, “vanilla” Kubernetes alone is not enough.

As a result, Kubernetes management platforms such as Mirantis Kubernetes Engine (MKE) and SUSE Rancher Prime have become popular. These platforms offer a “single pane of glass” for managing multiple clusters, centralizing access control (RBAC) and security policies, and streamlining day-to-day operations. SUSE Rancher Prime, for example, provides a unified web-based interface that hides much of the complexity of setting up and managing clusters in the public cloud or on-premises. Mirantis, similarly, focuses on delivering a production-ready environment with built-in security features and simplified automation.

Conclusion

Kubernetes has transformed how software is deployed and operated by giving cloud-native applications a scalable, resilient, and portable foundation. By automating the management of distributed systems, organizations can lower operational costs, improve uptime through self-healing, and shorten application delivery cycles. Whether it runs on a single local cluster for development or across a global hybrid cloud for a large enterprise, Kubernetes orchestration remains a cornerstone of modern digital transformation. Thanks to its extensive ecosystem and community support, it is well positioned to keep evolving alongside emerging technologies such as edge computing and AI-driven workloads.