Cloud Clusters in Kubernetes

Cluster computing connects a group of tightly or loosely coupled computers so that they work together as a single, integrated system. By linking several machines, usually over a high-speed local area network (LAN), a cluster lets an organization run complex workloads across a distributed system while presenting it as one powerful computer. This design is the foundation of modern cloud environments, where the idea has evolved into highly automated, container-focused platforms known as cloud clusters.

The Fundamental Importance of Clustering

The shift toward cluster computing is driven by the need for high-performance, cost-effective alternatives to expensive mainframe systems. Organizations use clusters to achieve high availability, scalability, and fast processing at a reasonable cost. Clustering is also hardware-neutral: because a parallel high-performance system can be assembled from many independent machines, computing capacity does not depend on any single vendor's products.

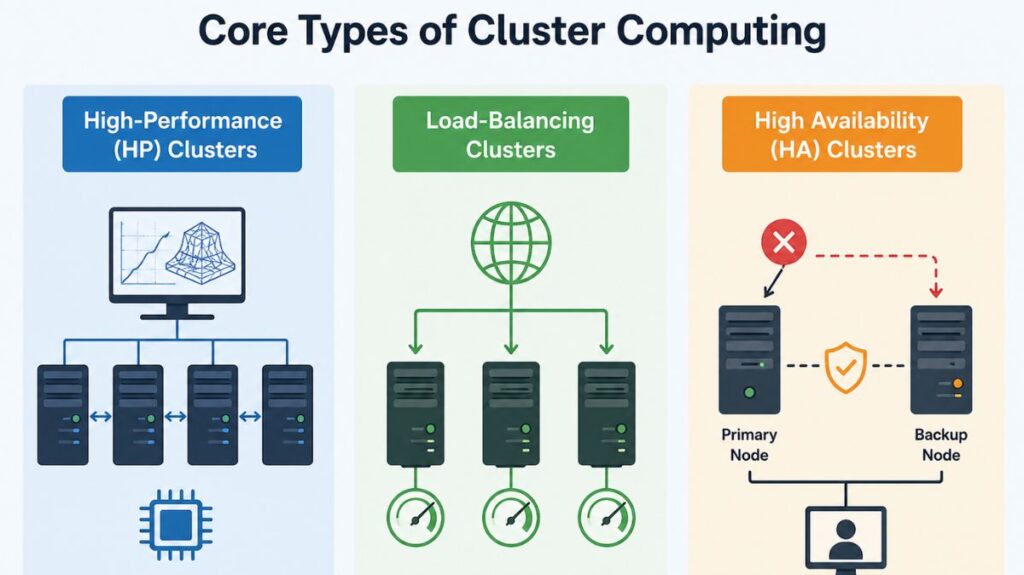

Core Types of Cluster Computing

Clusters are generally grouped by their main operational goal:

- High-Performance (HP) Clusters: These use the combined parallel processing power of many nodes to solve complex computational problems. They suit workloads in which nodes must communicate continuously while a task is running.

- Load-Balancing Clusters: These distribute incoming requests across several nodes running the same application. Because no single node becomes a bottleneck, they are well suited to web hosting, where traffic must be managed efficiently.

- High Availability (HA) Clusters: Built for resilience, HA clusters maintain redundant nodes that act as backups. If a primary node fails, a standby node takes over immediately, keeping critical services such as databases and e-commerce websites continuously available.
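The difference between a load-balancing cluster and an HA cluster can be sketched in a few lines of Python. This is a toy model under stated assumptions, not how any particular product works: `RoundRobinBalancer` and `FailoverPair` are hypothetical names, the node names are made up, and node health is set by hand rather than detected through heartbeats.

```python
from itertools import cycle


class RoundRobinBalancer:
    """Toy load-balancing cluster: spreads requests evenly across nodes."""

    def __init__(self, nodes):
        self.nodes = list(nodes)
        self._next = cycle(self.nodes)

    def route(self, request):
        # Each request goes to the next node in turn, so no single node
        # receives a disproportionate share of the traffic.
        return next(self._next), request


class FailoverPair:
    """Toy HA cluster: a standby node takes over when the primary fails."""

    def __init__(self, primary, standby):
        self.primary = primary
        self.standby = standby
        self.healthy = {primary: True, standby: True}

    def mark_failed(self, node):
        # Hypothetical stand-in for a failed health check.
        self.healthy[node] = False

    def active(self):
        # Serve from the primary while it is healthy; otherwise fail over.
        if self.healthy[self.primary]:
            return self.primary
        if self.healthy[self.standby]:
            return self.standby
        raise RuntimeError("no healthy node available")


if __name__ == "__main__":
    lb = RoundRobinBalancer(["node-a", "node-b", "node-c"])
    print([lb.route(f"req-{i}")[0] for i in range(6)])
    # ['node-a', 'node-b', 'node-c', 'node-a', 'node-b', 'node-c']

    ha = FailoverPair("db-primary", "db-standby")
    print(ha.active())  # db-primary
    ha.mark_failed("db-primary")
    print(ha.active())  # db-standby
```

The load balancer keeps every node busy, while the failover pair deliberately keeps the standby idle until it is needed; real clusters often combine both ideas.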

General Cluster Architecture and Classification

At its core, a cluster consists of multiple interconnected computers, or nodes, each of which can operate independently. A node can be a single-processor or multiprocessor system with its own memory, I/O, and operating system. The nodes are joined by high-speed interconnects and network switching hardware, and they run cluster-specific system software.

Clusters can also be classified by accessibility:

- Open Clusters: Every node needs its own IP address and is directly reachable from the internet, which increases security risk.

- Closed Clusters: Nodes are hidden behind a gateway node, which improves security and reduces the number of public IP addresses needed. Closed clusters work especially well for computationally intensive jobs.

Modern Cloud Clusters: The Kubernetes Model

Kubernetes is the industry standard for cloud-native infrastructure. It shifts infrastructure from a host-centric model to a container-centric one, letting organizations pool their hardware resources.

A cloud cluster’s operation relies on a client-server architecture split into two main parts:

The Control Plane (The Brains)

The Control Plane makes global decisions about the cluster and responds to cluster events. Its components include:

- API Server (kube-apiserver): The front end of the Control Plane. All communication with the cluster, from users and from other components, passes through it.

- etcd: A consistent, highly available key-value store that serves as the persistent record of the cluster’s state, including its desired state.

- Scheduler (kube-scheduler): Assigns each newly created Pod to a suitable worker node, taking resource requests and other constraints into account.

- Controller Manager (kube-controller-manager): Runs reconciliation loops that continuously move the cluster’s actual state toward the desired state (for example, replacing a Pod that has failed).

- Cloud Controller Manager (CCM): Integrates the cluster with a Cloud Service Provider (CSP), managing cloud resources such as load balancers and virtual network routes.
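The interplay between the Scheduler and the Controller Manager can be illustrated with a toy model. The sketch assumes a single resource (CPU, in millicores) and a simplified filter-then-score rule: keep only nodes with enough free CPU, then pick the one with the most left over. This is a stand-in for the idea behind kube-scheduler's filtering and scoring, not its actual plugins. `Node`, `schedule`, `remove_pod`, and `reconcile` are hypothetical helpers, not Kubernetes APIs.

```python
from dataclasses import dataclass, field


@dataclass
class Node:
    """A worker node with a single schedulable resource: CPU in millicores."""
    name: str
    cpu_capacity: int
    cpu_used: int = 0
    pods: list = field(default_factory=list)

    @property
    def cpu_free(self) -> int:
        return self.cpu_capacity - self.cpu_used


def schedule(pod_name, cpu_request, nodes):
    """Toy scheduler: drop nodes that cannot fit the Pod, then prefer the most free CPU."""
    feasible = [n for n in nodes if n.cpu_free >= cpu_request]  # filtering
    if not feasible:
        return None  # a real Pod would stay Pending
    best = max(feasible, key=lambda n: n.cpu_free)  # scoring (ties: first node wins)
    best.cpu_used += cpu_request
    best.pods.append(pod_name)
    return best.name


def remove_pod(name, pods, nodes, cpu_request):
    """Delete a Pod record and release the CPU it held on its node."""
    node_name = pods.pop(name)
    node = next(n for n in nodes if n.name == node_name)
    node.pods.remove(name)
    node.cpu_used -= cpu_request


def reconcile(desired, pods, nodes, cpu_request, app="web"):
    """One pass of a toy controller: move the actual replica count toward `desired`.

    `pods` maps Pod name -> node name and is updated in place.
    """
    i = 0
    while len(pods) < desired:
        name = f"{app}-{i}"
        i += 1
        if name in pods:
            continue  # this replica already exists
        node_name = schedule(name, cpu_request, nodes)
        if node_name is None:
            break  # no capacity left; stop creating replicas
        pods[name] = node_name
    while len(pods) > desired:
        # Remove the most recently added surplus replica.
        remove_pod(list(pods)[-1], pods, nodes, cpu_request)
    return pods


if __name__ == "__main__":
    nodes = [Node("worker-1", 2000), Node("worker-2", 2000)]
    pods = reconcile(3, {}, nodes, cpu_request=500)
    print(pods)  # {'web-0': 'worker-1', 'web-1': 'worker-2', 'web-2': 'worker-1'}

    remove_pod("web-1", pods, nodes, cpu_request=500)  # simulate a failed Pod
    reconcile(3, pods, nodes, cpu_request=500)         # the loop recreates it
    print(pods)  # {'web-0': 'worker-1', 'web-2': 'worker-1', 'web-1': 'worker-2'}
```

Running `reconcile` repeatedly is the essence of a control loop: it never needs to know why the actual state drifted, only how far it is from the desired state.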

Worker Nodes (The Workforce)

Worker nodes are the physical or virtual machines on which applications actually run. Every node runs:

- Kubelet: An agent that ensures the containers described in each Pod’s specification are running as the Control Plane requires.

- Kube-proxy: A network proxy that maintains network rules on the node so that traffic addressed to a Service reaches the right Pods.

- Container Runtime: The software that starts and stops containers, such as containerd.

Networking and Scalability in the Cloud

Dynamic networking is one of the hardest problems that cloud clusters solve. Because containers (running in Pods) are ephemeral and their IP addresses change, Kubernetes uses Services to provide stable endpoints. In cloud environments, the LoadBalancer Service type is commonly used to bring external traffic into the cluster through a provider-managed load balancer, while an Ingress acts as a gateway for more complex HTTP/HTTPS routing.
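How a Service hides Pod churn behind a stable address can be sketched as follows. This is a simplified model of what kube-proxy implements with node-level network rules: the `Service` class, its methods, and all IP addresses are hypothetical, and round-robin selection is used only for readability, since actual backend selection depends on the kube-proxy mode in use.

```python
import itertools


class Service:
    """Toy Service: a stable virtual IP in front of a changing set of Pod IPs."""

    def __init__(self, name, cluster_ip, port):
        self.name = name
        self.cluster_ip = cluster_ip  # stays fixed for the Service's lifetime
        self.port = port
        self._endpoints = []
        self._rr = None

    def update_endpoints(self, pod_ips):
        # Called whenever Pods are created or destroyed; clients that only
        # know cluster_ip never see this churn.
        self._endpoints = list(pod_ips)
        self._rr = itertools.cycle(self._endpoints) if self._endpoints else None

    def forward(self):
        """Pick a backend for a connection to cluster_ip:port (round robin here)."""
        if self._rr is None:
            raise ConnectionError(f"service {self.name} has no ready endpoints")
        return f"{next(self._rr)}:{self.port}"


if __name__ == "__main__":
    svc = Service("web", "10.96.0.10", 8080)
    svc.update_endpoints(["10.244.1.5", "10.244.2.7"])
    print(svc.forward())  # 10.244.1.5:8080
    svc.update_endpoints(["10.244.1.9"])  # a Pod was replaced and got a new IP
    print(svc.forward())  # 10.244.1.9:8080
```

Clients keep using `10.96.0.10:8080` throughout; only the endpoint list behind it changes.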

Cloud clusters stay responsive under changing load through automated scaling:

- Horizontal Pod Autoscaling (HPA): Automatically adjusts the number of Pod replicas based on observed metrics such as CPU utilization.

- Cluster Autoscaler (CA): When Pods cannot be scheduled because the existing nodes are full, the Cluster Autoscaler adds virtual machines to the cluster; it can also remove underutilized nodes.
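The HPA’s core scaling rule, as documented by Kubernetes, is desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue), with no change when the ratio is within a tolerance (0.1 by default). The sketch below applies that rule and clamps the result to illustrative min/max bounds. The node estimate is a much rougher stand-in, assumed here for illustration: it packs pending Pods’ CPU requests onto new nodes with a first-fit-decreasing heuristic, whereas the real Cluster Autoscaler simulates the scheduler against candidate node groups. Function names and parameters are hypothetical.

```python
import math


def hpa_desired_replicas(current_replicas, current_util, target_util,
                         min_replicas=1, max_replicas=10, tolerance=0.1):
    """HPA rule: desired = ceil(current_replicas * current_util / target_util)."""
    ratio = current_util / target_util
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to the target: don't scale
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


def nodes_to_add(pending_pod_cpu, node_cpu):
    """Rough count of new nodes needed for unschedulable Pods (first-fit decreasing)."""
    new_nodes = []  # remaining free CPU on each hypothetical new node
    for cpu in sorted(pending_pod_cpu, reverse=True):
        if cpu > node_cpu:
            raise ValueError("Pod requests more CPU than a node of this type has")
        for i, free in enumerate(new_nodes):
            if free >= cpu:
                new_nodes[i] -= cpu  # fits on an already-planned node
                break
        else:
            new_nodes.append(node_cpu - cpu)  # plan another node
    return len(new_nodes)


if __name__ == "__main__":
    print(hpa_desired_replicas(4, current_util=90, target_util=60))  # 6
    print(nodes_to_add([1500, 1500, 500], node_cpu=2000))            # 2
```

The two mechanisms work together: the HPA raises the replica count, and if the new Pods cannot fit on existing nodes, the Cluster Autoscaler adds capacity for them.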

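To avoid a single point of failure, production clusters typically run three or five Control Plane nodes. An odd number is used because etcd stays available only while a majority (quorum) of its members is healthy.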
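The typical production sizes of three or five control plane nodes follow from majority quorum, assuming etcd runs on the control plane nodes (a "stacked" topology). With n members, floor(n/2) + 1 must agree, so the cluster tolerates n minus that many failures. The hypothetical helper below tabulates this.

```python
def etcd_fault_tolerance(members):
    """Return (quorum size, tolerated failures) for a majority-quorum store like etcd."""
    quorum = members // 2 + 1
    return quorum, members - quorum


if __name__ == "__main__":
    for n in range(1, 6):
        quorum, tolerated = etcd_fault_tolerance(n)
        print(f"{n} members: quorum {quorum}, tolerates {tolerated} failure(s)")
```

Four members tolerate only one failure, the same as three, so even sizes add cost without adding resilience.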

Managed vs. Self-Managed Clusters

Rather than installing clusters manually on bare-metal servers with kubeadm, many organizations prefer managed Kubernetes services such as Google Kubernetes Engine (GKE), Amazon Elastic Kubernetes Service (EKS), and Azure Kubernetes Service (AKS). Managed services offer:

- Speed: Clusters can be provisioned quickly from a web console.

- Reliability: The provider manages the Control Plane’s high availability.

- Automated Updates: The provider automates complex software patching and upgrades.

Advantages, Disadvantages, and Applications

The benefits of cluster computing include:

- High performance (potentially exceeding mainframe capabilities)

- Scalability

- Flexibility for upgrades

- Ease of management

Clusters also have drawbacks, including:

- Expensive hardware

- Difficulty isolating faults across many components

- Substantial infrastructure space requirements as the network grows

Despite these obstacles, clusters have many applications. Cloud clusters are used to tackle challenging problems in:

- Astrophysics and aerodynamics.

- Predicting weather and earthquakes.

- Image rendering and data mining.

- Petroleum reservoir simulation and e-commerce.

Conclusion

Because cloud clusters give developers a uniform, infrastructure-agnostic layer, they have become the standard platform for building distributed applications. By abstracting away the complexity of compute, storage, and networking, Kubernetes clusters help organizations improve the speed, efficiency, and reliability of their software delivery pipelines.
