What is Kubernetes?

The emergence of containerization caused a seismic change in software development. Tools like Docker and Docker Swarm first revolutionized how developers package applications and their dependencies into portable units. As deployments grew to hundreds or even thousands of containers, however, new difficulties arose. Scalability, resource allocation, security, multi-cloud administration, and zero-downtime rolling upgrades all became harder problems. Kubernetes was developed expressly to address these issues, serving as the “brain,” or orchestrator, that automatically manages containerized applications at scale.

The Origins and Identity of Kubernetes

Kubernetes, often abbreviated K8s (the “8” stands for the eight letters between “K” and “s”), is an open-source platform for automating the deployment, scaling, and management of containerized services. It grew out of Google’s internal expertise, and its design was influenced by Borg and Omega, Google’s proprietary cluster-management systems. Google introduced Kubernetes in 2014. In 2015, it was donated to the Cloud Native Computing Foundation (CNCF), which still oversees the project.

The name “Kubernetes” comes from the Greek word for “helmsman” or “pilot.” This etymology aptly symbolizes the platform’s role: it steers complex applications through the choppy waters of modern infrastructure so that they arrive safely and run efficiently.

From Monoliths to Microservices

To understand why an orchestrator such as Kubernetes is needed, consider how software architecture has evolved. Applications were traditionally monolithic, meaning the whole codebase was tightly coupled and deployed as a single unit. This architecture carried serious risks. Updating a single module, such as the payment system of an e-commerce app, required redeploying the entire program, and a small error in one area could bring down the whole system.

The industry eventually shifted to microservices, an architecture in which each feature, such as payments, alerts, or search, is developed and deployed independently. This change brought more flexibility and scalability, but it also added a management burden. Instead of administering one large application, companies now had to manage thousands of small containerized services. Without a central manager to coordinate these services, environments quickly became messy and hard to maintain. Kubernetes stepped in to provide the automated deployment, scaling, and coordination logic.

Kubernetes as an Orchestra Conductor

A useful way to think about Kubernetes is as an orchestra conductor, with each container as a musician. A developer can direct a few musicians individually, but performing a complex symphony with a full orchestra requires a conductor.

The developer gives Kubernetes the “sheet music”: the desired configuration. The platform then makes sure that each “musician” (container) plays its part correctly. If a musician falls ill (a container fails), Kubernetes replaces it. If more volume is needed (scaling), the conductor brings in additional players.
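The mechanism beneath the metaphor is simple. Kubernetes controllers repeatedly compare the desired state with what is actually running, then act to close the gap. The Python sketch below models one such loop for a single replica count. `Cluster`, `reconcile`, and the container IDs are hypothetical stand-ins, not Kubernetes APIs. Real controllers watch the cluster's API server instead of mutating a local object.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Cluster:
    """Hypothetical stand-in for observed state: IDs of containers currently running."""
    running: set = field(default_factory=set)
    _ids: count = field(default_factory=lambda: count(1))

    def start_container(self) -> str:
        cid = f"container-{next(self._ids)}"
        self.running.add(cid)
        return cid

    def stop_container(self, cid: str) -> None:
        self.running.discard(cid)


def reconcile(desired_replicas: int, cluster: Cluster) -> list:
    """One pass of a control loop: compare desired vs. observed state, then act."""
    actions = []
    gap = desired_replicas - len(cluster.running)
    if gap > 0:
        # Too few "musicians" (a crash or a scale-up): start new containers.
        for _ in range(gap):
            actions.append(f"start {cluster.start_container()}")
    elif gap < 0:
        # Too many (a scale-down): stop the surplus, in sorted order for determinism.
        for cid in sorted(cluster.running)[:-gap]:
            cluster.stop_container(cid)
            actions.append(f"stop {cid}")
    return actions


if __name__ == "__main__":
    cluster = Cluster()
    print(reconcile(3, cluster))  # ['start container-1', 'start container-2', 'start container-3']
    cluster.stop_container("container-2")  # simulate a crash
    print(reconcile(3, cluster))  # ['start container-4']
    print(reconcile(1, cluster))  # ['stop container-1', 'stop container-3']
```

The loop only looks at the gap between desired and observed state. As a result, the same code handles both a crashed container and a deliberate change in the desired count.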

Understanding Cluster Architecture

Kubernetes operates on a cluster: a group of physical or virtual machines, called nodes, that work together to run applications. A cluster has two main roles:

- Control Plane (formerly called the Master Node): The “brain” of the cluster. It makes cluster-wide decisions, such as scheduling where applications run, and monitors the state of every component.

- Worker Nodes: The “muscles” of the cluster, meaning the machines (physical or virtual) that actually run applications inside containers. Every worker node runs a few essential components:
  - a container runtime (such as containerd or CRI-O);
  - the kubelet, an agent that ensures the Pods assigned to the node are running as intended;
  - kube-proxy, which maintains the network rules that route traffic to Pods.

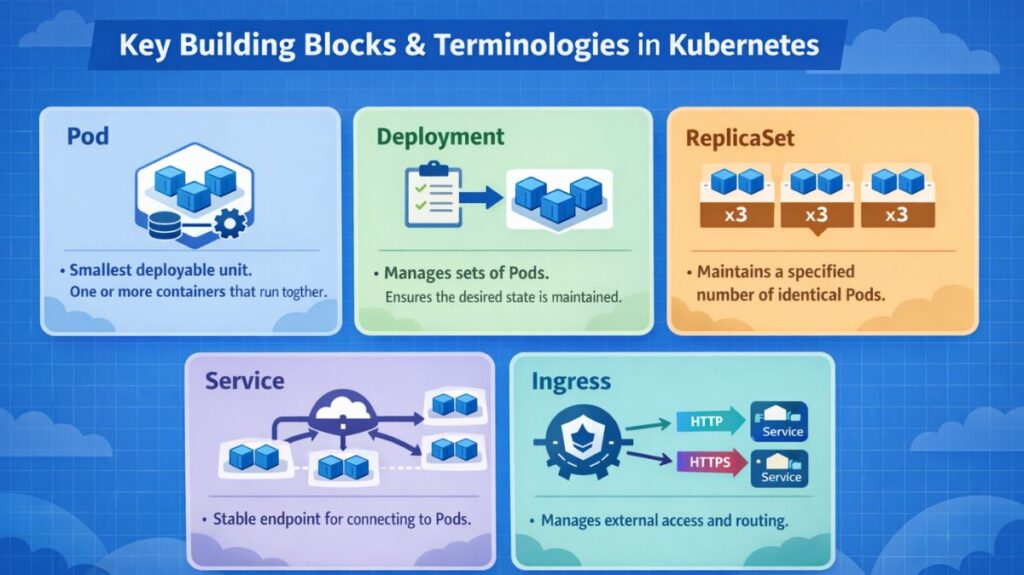

Key Building Blocks and Terminologies

Navigating the platform requires a few specific terms that describe how Kubernetes organizes and manages workloads:

- Pod: The smallest deployable unit in K8s. A Pod wraps one or more containers that must run together, letting them share network and storage resources.

- Deployment: A higher-level object that manages a group of identical Pods. You declare the desired state, for example which container image to run and how many copies, and Kubernetes works to reach and maintain that state.

- ReplicaSet: Working in the background, usually created and managed by a Deployment, a ReplicaSet ensures that a specified number of identical Pods is running at all times.

- Service: Pods are continually created and destroyed, so their IP addresses change frequently. A Service provides a stable endpoint (a fixed virtual IP address and DNS name). Other Pods and clients can use it to reach a set of Pods despite these changes.

- Ingress: Manages external access to Services. It typically provides HTTP and HTTPS routing through an Ingress controller that acts as a reverse proxy.
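A manifest shows how these objects relate to one another. Kubernetes accepts manifests in JSON as well as YAML, so the Python sketch below builds a Deployment and a Service using only the standard library. The name `web`, the `app: web` label, and the `nginx:1.25` image are illustrative assumptions. The `selects` helper is a hypothetical, simplified model of equality-based label matching. A Service relies on label matching, not IP addresses, to find its Pods.

```python
import json

# Illustrative values only: any name, label, or image could be used here.
APP_LABELS = {"app": "web"}

deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web"},
    "spec": {
        "replicas": 3,  # the ReplicaSet this Deployment creates keeps 3 Pods running
        "selector": {"matchLabels": APP_LABELS},
        "template": {  # Pod template: every Pod gets these labels and containers
            "metadata": {"labels": APP_LABELS},
            "spec": {
                "containers": [
                    {"name": "web", "image": "nginx:1.25", "ports": [{"containerPort": 80}]}
                ]
            },
        },
    },
}

service = {
    "apiVersion": "v1",
    "kind": "Service",
    "metadata": {"name": "web"},
    "spec": {
        "selector": APP_LABELS,  # targets any Pod carrying these labels, whatever its IP
        "ports": [{"port": 80, "targetPort": 80}],
    },
}


def selects(selector: dict, labels: dict) -> bool:
    """Hypothetical model of equality-based matching: every selector pair must be on the Pod."""
    return all(labels.get(key) == value for key, value in selector.items())


# A v1 List bundles several objects into one JSON document.
manifest = {"apiVersion": "v1", "kind": "List", "items": [deployment, service]}

if __name__ == "__main__":
    print(json.dumps(manifest, indent=2))
```

You could save the printed output to a file such as `web.json` and run `kubectl apply -f web.json` to create both objects. Kubernetes would then create the ReplicaSet and Pods itself.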

Configuration, Security, and Storage

Kubernetes also provides dedicated objects for managing the configuration and data that applications need to run:

- ConfigMap: Lets developers keep non-sensitive configuration parameters, such as log levels, feature flags, or service URLs, separate from the application code. This separation makes it possible to change settings without rebuilding the application.

- Secret: Similar to a ConfigMap, but intended for sensitive data such as passwords, tokens, and API keys. By default, Secret values are base64-encoded rather than encrypted, so protecting them requires access controls and, ideally, encryption at rest.

- Persistent Volume (PV): By default, data written inside a container is lost when the container restarts. A Persistent Volume provides storage whose lifecycle is independent of any Pod, so the data survives when a Pod is restarted or deleted.
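The Python sketch below builds manifests for the three objects described above, plus a Pod that consumes them. It also shows that base64 encoding is trivially reversible. The names `web-config`, `web-credentials`, and `web-data`, the key `DB_PASSWORD`, and all values are hypothetical. In practice, a Pod usually requests a Persistent Volume through a PersistentVolumeClaim, as shown here.

```python
import base64
import json

# Hypothetical names and values, for illustration only.
config_map = {
    "apiVersion": "v1",
    "kind": "ConfigMap",
    "metadata": {"name": "web-config"},
    "data": {"LOG_LEVEL": "info", "SEARCH_URL": "http://search:8080"},  # plain strings
}


def make_secret(name: str, values: dict) -> dict:
    """Build a Secret manifest; values under `data` must be base64-encoded."""
    encoded = {k: base64.b64encode(v.encode("utf-8")).decode("ascii") for k, v in values.items()}
    return {"apiVersion": "v1", "kind": "Secret", "metadata": {"name": name},
            "type": "Opaque", "data": encoded}


secret = make_secret("web-credentials", {"DB_PASSWORD": "s3cr3t"})

# A claim for 1 GiB of storage, bound to a matching (or dynamically provisioned) PV.
pvc = {
    "apiVersion": "v1",
    "kind": "PersistentVolumeClaim",
    "metadata": {"name": "web-data"},
    "spec": {"accessModes": ["ReadWriteOnce"], "resources": {"requests": {"storage": "1Gi"}}},
}

pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "web"},
    "spec": {
        "containers": [{
            "name": "web",
            "image": "nginx:1.25",
            # Every ConfigMap key becomes an environment variable.
            "envFrom": [{"configMapRef": {"name": "web-config"}}],
            # A single Secret key injected as an environment variable.
            "env": [{"name": "DB_PASSWORD",
                     "valueFrom": {"secretKeyRef": {"name": "web-credentials", "key": "DB_PASSWORD"}}}],
            # Files written under /data live on the claimed volume, not in the container.
            "volumeMounts": [{"name": "data", "mountPath": "/data"}],
        }],
        "volumes": [{"name": "data", "persistentVolumeClaim": {"claimName": "web-data"}}],
    },
}

if __name__ == "__main__":
    print(secret["data"]["DB_PASSWORD"])  # czNjcjN0
    # base64 is an encoding, not encryption: anyone who can read the Secret can decode it.
    print(base64.b64decode(secret["data"]["DB_PASSWORD"]).decode("utf-8"))  # s3cr3t
    print(json.dumps({"apiVersion": "v1", "kind": "List",
                      "items": [config_map, secret, pvc, pod]}, indent=2))
```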

The Powerful Features of K8s

Its extensive set of automation features has made Kubernetes widely adopted, and major cloud providers offer it as a managed service.

- Automated Scheduling: K8s decides which node is best suited to host each container. It bases this on the resources the workload requests and the capacity available on each node.

- Self-Healing: When a container crashes, Kubernetes automatically restarts it. When a node fails, Kubernetes replaces the affected Pods and reschedules them on healthy nodes, all without human intervention.

- Rollouts and Rollbacks: Kubernetes rolls out updates gradually, replacing Pods a few at a time. If an update fails, it can quickly roll back to a previous version of the application.

- Scaling and Load Balancing: Kubernetes can scale applications horizontally by adding or removing Pod replicas to handle spikes in demand. It distributes traffic across those replicas to maintain performance.

- Resource Optimization: Kubernetes monitors resource usage across the cluster and places workloads according to their resource requests. This helps ensure that hardware is used as efficiently as possible.
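Two of these features boil down to small calculations, and the Python sketch below models both.

- **Scheduling.** A filter phase drops nodes that lack enough unrequested CPU or memory for the Pod. A score phase then prefers the node left least allocated after placement. This mirrors the filter-and-score structure of kube-scheduler, but in greatly simplified form. `Node`, `schedule`, and the example numbers are hypothetical.
- **Scaling.** `desired_replicas` applies the ratio formula documented for the Horizontal Pod Autoscaler. It omits the autoscaler's tolerance and stabilization behavior.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Node:
    name: str
    cpu_capacity_m: int   # allocatable CPU in millicores (1000m = 1 core)
    mem_capacity_mi: int  # allocatable memory in MiB
    cpu_used_m: int = 0   # CPU already requested by Pods on this node
    mem_used_mi: int = 0  # memory already requested by Pods on this node


def schedule(cpu_m: int, mem_mi: int, nodes: List[Node]) -> Optional[str]:
    """Pick a node for a Pod with the given requests, or None if no node fits."""
    # Filter phase: keep only nodes with enough unrequested CPU and memory.
    feasible = [
        n for n in nodes
        if n.cpu_capacity_m - n.cpu_used_m >= cpu_m
        and n.mem_capacity_mi - n.mem_used_mi >= mem_mi
    ]
    if not feasible:
        return None  # in Kubernetes, such a Pod would remain Pending

    # Score phase: average fraction of CPU and memory still free after placement.
    def score(n: Node) -> float:
        cpu_free = (n.cpu_capacity_m - n.cpu_used_m - cpu_m) / n.cpu_capacity_m
        mem_free = (n.mem_capacity_mi - n.mem_used_mi - mem_mi) / n.mem_capacity_mi
        return (cpu_free + mem_free) / 2

    return max(feasible, key=score).name


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float) -> int:
    """HPA ratio: ceil(currentReplicas * currentMetricValue / desiredMetricValue)."""
    return math.ceil(current_replicas * current_metric / target_metric)


if __name__ == "__main__":
    nodes = [
        Node("node-a", 4000, 8192, cpu_used_m=3500, mem_used_mi=2048),
        Node("node-b", 2000, 4096, cpu_used_m=500, mem_used_mi=1024),
        Node("node-c", 4000, 8192, cpu_used_m=2000, mem_used_mi=6144),
    ]
    print(schedule(1000, 1024, nodes))  # node-b (node-a lacks CPU; node-b outscores node-c)
    print(schedule(4000, 512, nodes))   # None
    print(desired_replicas(4, 90, 60))  # 6: four Pods at 90% CPU against a 60% target
```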

By automating these complex operational tasks, Kubernetes lives up to its name as the helmsman of the modern cloud-native world. It frees developers to focus on writing code rather than maintaining infrastructure.